This post outlines some performance optimization tips for virtual hardware within a VMware infrastructure. Performance is a crucial topic: how far can you go to optimize each and every VM within your infrastructure? VMware publishes a number of guidelines; here we'll focus on optimizing the virtual machine hardware, that is, the VM's configuration.

You can pick different components for your VM depending on the guest OS you're using (Linux, Windows), your physical hardware configuration and, for example, your network configuration (a vSphere Standard Switch (VSS) or a vSphere Distributed Switch (VDS)).

By optimizing your VM's virtual hardware you can achieve significant “savings”: even if workloads do not run faster, they may use less CPU, which can in turn allow higher consolidation ratios.

Virtual Machine Hardware (VMX) performance optimization tips

The latest virtual hardware version is 13 (vmx-13), which was introduced in VMware vSphere 6.5. If you deploy VMs often, it is best to create a VM template. Here are a few guidelines on creating a VM with a minimal set of hardware, or with hardware which may be more efficient on your ESXi host's CPU.

Disconnect or disable any physical hardware devices that you will not be using, including old devices and any others you know you will not need, such as:

- COM ports

- LPT ports

- USB controllers

- Floppy drives

- Optical drives (that is, CD or DVD drives)

- Network interfaces

- Storage controllers

Quote from VMware:

Disabling hardware devices (typically done in virtual machine BIOS) can free interrupt resources. Additionally, some devices, such as USB controllers, operate on a polling scheme that consumes extra CPU resources. Lastly, some PCI devices reserve blocks of memory, making that memory unavailable to ESXi.
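As a rough illustration of auditing a VM for such devices, here is a minimal Python sketch that scans the text of a .vmx file for optional devices still marked as present. The key prefixes (`floppy`, `serial`, `parallel`, `usb`) and the sample file content are assumptions for illustration, not an exhaustive or authoritative list of .vmx keys, and the helper names are hypothetical.

```python
# Hypothetical helper: list optional devices that a .vmx file marks as present.
# The prefixes below are assumptions based on common .vmx key naming.
OPTIONAL_DEVICE_PREFIXES = ("floppy", "serial", "parallel", "usb")


def parse_vmx(text):
    """Parse 'key = "value"' lines from .vmx text into a dict."""
    settings = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        settings[key.strip()] = value.strip().strip('"')
    return settings


def present_optional_devices(settings):
    """Return device names (e.g. 'floppy0') whose '.present' flag is TRUE."""
    devices = []
    for key, value in settings.items():
        name, _, attr = key.rpartition(".")
        if (
            attr.lower() == "present"
            and value.upper() == "TRUE"
            and name.lower().startswith(OPTIONAL_DEVICE_PREFIXES)
        ):
            devices.append(name)
    return sorted(devices)


# Sample content invented for this example.
SAMPLE_VMX = '''
virtualHW.version = "13"
floppy0.present = "TRUE"
serial0.present = "TRUE"
usb.present = "FALSE"
ethernet0.present = "TRUE"
scsi0.present = "TRUE"
'''

print(present_optional_devices(parse_vmx(SAMPLE_VMX)))
```

With the sample above, only the floppy drive and the serial port are reported; the network and storage controllers are left alone because the VM presumably needs them.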

Floppy, do you need it?

You no longer use floppy drives within VMs, right? So remove the floppy drive from your VM's hardware or, better yet, disable it within the BIOS of the VM.

Virtual Storage Controller Type

Depending on your OS type and storage array, you may be able to use the new virtual NVMe device (only for all-flash SAN/vSAN environments), which provides 30-50% lower CPU cost per I/O and 30-80% higher IOPS compared to virtual SATA devices.

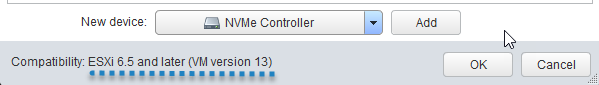

The Add New Device wizard when adding new virtual hardware to a VM configured with virtual hardware version 13 (vmx-13).

If not, you can still use the VMware Paravirtual SCSI (PVSCSI) storage controller, which offers lower CPU usage.

PVSCSI adapters are supported for boot drives in only some operating systems. For additional information see VMware KB article 1010398.

Quote from VMware:

PVSCSI and LSI Logic Parallel/SAS are essentially the same when it comes to overall performance capability. PVSCSI, however, is more efficient in the number of host compute cycles that are required to process the same number of IOPS. This means that if you have a very storage IO intensive virtual machine, this is the controller to choose to ensure you save as many cpu cycles as possible that can then be used by the application or host. Most modern operating systems that can drive high IO support one of these two controllers.

Here’s a detailed whitepaper

Virtual Network Card Type

Virtual network adapters, or virtual network interface cards (vNICs), connect your VMs to the outside world. When creating a new VM, it’s important to choose the right guest OS from the list, because VMware then picks the appropriate adapter type. If you have a specific OS or are running a specific (e.g. low-latency) application, you might need a vNIC other than the default.

I have recently published a post listing all different options. Check it out.

Tip:

When networking two virtual machines on the same ESXi host, try to connect them to the same vSwitch. When connected this way, their network speeds are not limited by the wire speed of any physical network card. Instead, they transfer network packets as fast as the host resources allow.
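The tip above boils down to a simple check, sketched here in Python. The `VmNic` record and its fields are hypothetical and stand in for whatever inventory data you have; this is not a vSphere API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VmNic:
    """Hypothetical record describing where a VM's vNIC is attached."""
    vm: str
    host: str
    vswitch: str


def traffic_stays_on_host(a, b):
    """True if both vNICs sit on the same ESXi host and the same vSwitch,
    so traffic between them need not cross a physical network card."""
    return a.host == b.host and a.vswitch == b.vswitch


web = VmNic(vm="web01", host="esxi-a", vswitch="vSwitch1")
db = VmNic(vm="db01", host="esxi-a", vswitch="vSwitch1")
backup = VmNic(vm="bkp01", host="esxi-a", vswitch="vSwitch2")

print(traffic_stays_on_host(web, db))      # same host, same vSwitch
print(traffic_stays_on_host(web, backup))  # same host, different vSwitch
```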

Virtual CPU, Cores, and Options

“Start small, add more, if needed.”…

Even if the guest operating system doesn’t use some of its vCPUs, configuring virtual machines with those vCPUs still imposes some small resource requirements on ESXi that translate to real CPU consumption on the host.

Further checks should be done for NUMA node sizes, CPU cache locality, and workload implementation details.

Let's say you're asking yourself how many virtual CPUs to give your VM. vSphere 6.5 allows you to assign up to 128 virtual CPUs to a VM, but in reality you're most likely limited by your hardware (not by vSphere). Most importantly, by adding too many vCPUs you might actually introduce a bottleneck: the VM won't run faster, but slower.

Quote:

Configuring a virtual machine with more virtual CPUs (vCPUs) than its workload can use might cause slightly increased resource usage, potentially impacting performance on very heavily loaded systems. Common examples of this include a single-threaded workload running in a multiple-vCPU virtual machine or a multi-threaded workload in a virtual machine with more vCPUs than the workload can effectively use.

Quote:

A virtual machine cannot have more virtual CPUs than the number of logical cores on the host. The number of logical cores is equal to the number of physical cores if hyperthreading is disabled or two times that number if hyperthreading is enabled.
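The rule in the quote above can be written as a small calculation. This is a minimal Python sketch; the function names are made up for illustration, and the cap of 128 is the vSphere 6.5 per-VM limit mentioned earlier in this post.

```python
def logical_cores(physical_cores, hyperthreading):
    """Logical cores = physical cores, doubled when hyperthreading is enabled."""
    if physical_cores < 1:
        raise ValueError("physical_cores must be at least 1")
    return physical_cores * 2 if hyperthreading else physical_cores


def max_vcpus(physical_cores, hyperthreading, vsphere_limit=128):
    """Upper bound on vCPUs for one VM: the smaller of the host's logical
    core count and the per-VM limit (128 in vSphere 6.5)."""
    return min(logical_cores(physical_cores, hyperthreading), vsphere_limit)


print(max_vcpus(16, hyperthreading=False))  # 16 logical cores
print(max_vcpus(16, hyperthreading=True))   # 32 logical cores
print(max_vcpus(96, hyperthreading=True))   # 192 logical cores, capped at 128
```

This is only the hard ceiling. As the first quote says, the sensible number of vCPUs is usually much lower and depends on what the workload can actually use.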

Virtual Memory

Again. Start small, increase vRAM later.

If a VM has more RAM configured than it uses and the ESXi host becomes overcommitted on RAM, ESXi might start ballooning. The performance impact of over-allocating memory is, however, far less than that of under-allocating it. Ideally, you should avoid both over-allocating and under-allocating RAM.

Quote:

Over-allocating memory also unnecessarily increases the virtual machine memory overhead. While ESXi can typically reclaim the over-allocated memory, it can’t reclaim the overhead associated with this over-allocated memory, thus consuming memory that could otherwise be used to support more virtual machines.
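To make the "start small" advice concrete, here is a hedged Python sketch that classifies a VM's configured RAM against its observed peak active memory. The 25% headroom and the function name are assumptions chosen for illustration only; they are not VMware recommendations, so pick values that suit your own workloads.

```python
def classify_vram(configured_mb, peak_active_mb, headroom=0.25):
    """Classify configured RAM against observed peak active memory.

    headroom is an assumed safety margin (25% by default), not a VMware value.
    """
    if configured_mb <= 0 or peak_active_mb < 0:
        raise ValueError("memory sizes must be positive")
    if configured_mb < peak_active_mb:
        return "under-allocated"
    if configured_mb > peak_active_mb * (1 + headroom):
        return "over-allocated"
    return "ok"


print(classify_vram(configured_mb=4096, peak_active_mb=6000))
print(classify_vram(configured_mb=16384, peak_active_mb=4096))
print(classify_vram(configured_mb=8192, peak_active_mb=7000))
```

The three sample calls show an under-allocated VM, an over-allocated one, and one that sits within the assumed headroom.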

The PDF I'm referring to is a reference document called Performance Best Practices for VMware vSphere 6.5, which helps IT admins maximize the performance of VMware vSphere 6.5. This post covers only a small part of that document.

Source: VMware Performance best practices PDF


On disabling USB controllers – can’t you possibly kill the mouse & keyboard? How do you know which is which?

Good question. You need the USB controller only if you’re using USB passthrough from your ESXi host (that is, when a USB device is connected to the host and passed through to your VM). In that case, you’ll need a USB controller in your VM’s hardware.