New test of PVSCSI adapter compared to LSI Logic SAS.

A few weeks back I pointed out a VMware KB article addressing the question of when to use PVSCSI, and whether or not it's a good idea to use it for VMs with lower load. The article was buried here in the quantity of posts since…

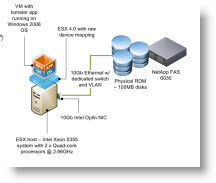

Today I saw a new blog post on the VMware VROOM blog about a newly released whitepaper, in which they ran some good tests with both adapters (LSI Logic SAS and PVSCSI) and compared the results on FC and on 10 Gb iSCSI.

The throughput results favour PVSCSI only for high-load VMs. There the benefit of using the PVSCSI adapter was quite remarkable, while on lower loads there is almost no difference.

All the tests were run under high load generated with Iometer, a popular testing tool available from SourceForge.

For Fibre Channel:

On Fibre Channel, PVSCSI is 18%, 13%, and 7% better than LSI Logic in throughput with 1KB, 2KB, and 4KB I/O block sizes, respectively.

PVSCSI reduces the CPIO by 10% to 30% compared to LSI Logic there. (CPIO, cycles per I/O, is a measure of CPU efficiency: how many cycles the CPU has to spend to process a single I/O request.)
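To make the CPIO metric concrete, here is a minimal Python sketch of how it could be computed and compared between two adapters. The function names and the cycle and I/O counts are hypothetical, chosen only for illustration; they are not figures from the whitepaper.

```python
def cpio(cpu_cycles: float, io_count: int) -> float:
    """Cycles per I/O: CPU cycles spent divided by I/O requests completed."""
    if io_count <= 0:
        raise ValueError("io_count must be positive")
    return cpu_cycles / io_count


def relative_reduction(baseline: float, candidate: float) -> float:
    """Fraction by which candidate is lower than baseline (0.2 means 20% lower)."""
    return (baseline - candidate) / baseline


# Hypothetical measurements, not taken from the whitepaper.
lsi_cpio = cpio(cpu_cycles=2_000_000_000, io_count=100_000)
pvscsi_cpio = cpio(cpu_cycles=1_600_000_000, io_count=100_000)

print(f"LSI Logic CPIO: {lsi_cpio:.0f}")
print(f"PVSCSI CPIO:    {pvscsi_cpio:.0f}")
print(f"Reduction:      {relative_reduction(lsi_cpio, pvscsi_cpio):.0%}")
```

A lower CPIO means the host spends fewer CPU cycles for the same amount of I/O, which is what the 10% to 30% figure expresses.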

On iSCSI:

They used a 10 Gb iSCSI network with the standard MTU of 1500 (no jumbo frames configured). PVSCSI outperforms LSI Logic by up to 9% in the 1KB I/O block size.

As for the CPIO, they concluded that PVSCSI reduces it by 1% to 25% compared to LSI Logic.

For the low load VMs (under 2,000 IOPS) the PVSCSI driver is not suitable because of higher latency.
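As a rough rule of thumb, that guidance could be written down as a tiny helper. This is only a sketch: the function name is hypothetical, the 2,000 IOPS cut-off is the one quoted above, and a real decision should also take measured latency into account.

```python
# Threshold quoted above; below it, PVSCSI shows higher latency.
LOW_LOAD_IOPS_THRESHOLD = 2000


def suggest_adapter(expected_iops: float) -> str:
    """Suggest a virtual SCSI adapter from the expected I/O rate of a VM."""
    if expected_iops < LOW_LOAD_IOPS_THRESHOLD:
        return "LSI Logic SAS"
    return "PVSCSI"


for iops in (500, 1999, 2000, 15000):
    print(f"{iops:>6} IOPS -> {suggest_adapter(iops)}")
```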

PVSCSI has higher latency than LSI Logic if the workload drives low I/O rates. This is because the ESX 4.0 design of PVSCSI coalesces interrupts based on outstanding I/Os (OIOs) and not on throughput. So when the virtual machine is requesting a lot of I/O but the storage is not delivering it, the PVSCSI driver is still coalescing interrupts.

PVSCSI uses interrupt coalescing. Coalescing? What's that? Here is an explanation from Scott Sauer:

Currently the PVSCSI driver coalesces based on OIOs only, and not throughput. This means that when the virtual machine is requesting a lot of IO but the storage is not delivering, the PVSCSI driver is coalescing interrupts. But without the storage supplying a steady stream of IOs there are no interrupts to coalesce. The result is a slightly increased latency with little or no efficiency gain for PVSCSI in low throughput environments.
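The effect can be illustrated with a toy simulation. This is not the PVSCSI driver's actual algorithm, just a simplified model under stated assumptions: coalescing is already switched on (because the guest has many outstanding I/Os), completions are held until either `batch_size` of them have accumulated or `max_delay` time units have passed since the first held completion, and then one interrupt is raised. The function name, `batch_size`, `max_delay`, and the timings are all hypothetical.

```python
def simulate(completion_times, batch_size=4, max_delay=100):
    """Toy interrupt coalescing model.

    Returns (interrupt_count, mean_added_latency), where added latency is the
    time a completion waits between arriving and its interrupt being raised.
    """
    interrupts = 0
    added = []    # added latency of every delivered completion
    pending = []  # arrival times of completions waiting for an interrupt
    for t in completion_times:
        # The timer expired before this completion arrived: flush what we hold.
        if pending and t - pending[0] >= max_delay:
            fire = pending[0] + max_delay
            added.extend(fire - p for p in pending)
            interrupts += 1
            pending = []
        pending.append(t)
        # The batch is full: raise one interrupt for all held completions.
        if len(pending) == batch_size:
            added.extend(t - p for p in pending)
            interrupts += 1
            pending = []
    if pending:  # leftovers are delivered when their timer expires
        fire = pending[0] + max_delay
        added.extend(fire - p for p in pending)
        interrupts += 1
    mean_added = sum(added) / len(added) if added else 0.0
    return interrupts, mean_added


# Storage delivering a steady stream: one completion every 10 time units.
fast = simulate([i * 10 for i in range(8)])
# Storage delivering slowly: one completion every 1000 time units.
slow = simulate([i * 1000 for i in range(8)])

print("steady stream:", fast)
print("slow storage: ", slow)
```

In this model the steady stream needs 2 interrupts for 8 I/Os with a small added delay, while the slow stream still needs 8 interrupts and every I/O waits for the full timer. That is the "slightly increased latency with little or no efficiency gain" from the quote.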

An update to the PVSCSI driver is planned to make it as efficient as LSI Logic on lower workloads, but only in future releases of VMware vSphere.

For now I'll be using LSI Logic for all my VMs, except for some high-load VMs…

Source: VROOM