vMotion – have you ever wondered how it works in detail?

I thought I knew how vMotion works. At least until today. Then I discovered this video from VMware, a really detailed session that goes very deep. You'll see that it's even more amazing than you thought. The whole video is 47 minutes long, so watch it only if you have enough time. It's worth it. The speakers are Sreekanth Setty and Gabriel Tarasuk-Levin, both from VMware R&D.

The video comes from the VMworld session VSP2122, "VMware vMotion in VMware vSphere 5.0: Architecture, Performance and Best Practices", and it was uploaded to YouTube by VMworldTV.

vMotion is used by FT, DPM, DRS... most of the VMware technologies.

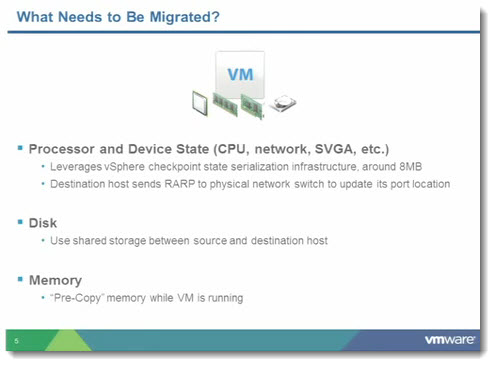

Basically, vMotion requires that the VMDK disk files of the VM being moved reside on shared storage that is accessible from both hosts, the source host and the destination host. The source host then releases ownership of those VMDK files, and the destination host opens the same VMDK files.

The moving process is handled by something called the “checkpoint infrastructure”, which I had never heard of, but I was curious, so I just kept watching…

The video goes really deep, and as an average VMware admin you won't need such deep knowledge of the vMotion process, but if you're curious about how it really works, just keep watching.

The memory handling – simple way (the naive way):

Quiesce the VM on the source host > compute the device checkpoint > send it to the destination > copy the memory state from the source host to the destination host > resume the VM on the destination host

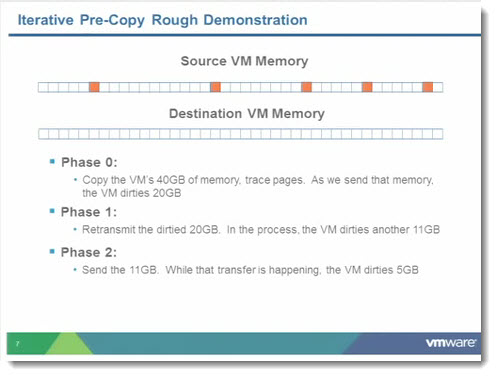

In reality it's more complicated, because moving 64 GB of RAM over 10 Gb Ethernet would take about 50 seconds with the VM stopped, which is too long. So how is it done?
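The 50-second figure is easy to check with a bit of arithmetic. Here is a minimal sketch; the function name is mine, and it assumes decimal gigabytes and a link running at full line rate with no protocol overhead, so real numbers would be somewhat worse.

```python
def transfer_seconds(memory_gb: float, link_gbit_per_s: float) -> float:
    """Seconds needed to push `memory_gb` gigabytes over a link.

    Assumption: the link runs at full line rate and protocol
    overhead is ignored.
    """
    gigabits = memory_gb * 8          # 1 byte = 8 bits
    return gigabits / link_gbit_per_s


# 64 GB of RAM over a single 10 GbE link
print(f"{transfer_seconds(64, 10):.1f} s")
# The same VM over a 1 GbE link
print(f"{transfer_seconds(64, 1):.1f} s")
```

With the naive approach the VM is stopped for that whole time, which is why nobody would accept it.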

It's shown in the graphics from the video. In fact, there is a first full copy of the memory state while the source VM continues to run (so no interruption for users), and then the deltas, the memory pages that changed in the meantime, are copied over several passes.
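To get a feel for why several passes help, here is a small simulation of that iterative copy. It is only a sketch under my own assumptions, not VMware's implementation: the constant dirty rate, the stop threshold and all names are hypothetical.

```python
def precopy_passes(memory_mb, dirty_rate_mb_s, link_mb_s,
                   stop_threshold_mb=100, max_passes=20):
    """Simulate iterative pre-copy of a running VM's memory.

    Returns (passes, remaining_mb), where passes is a list of
    (pass_number, mb_sent, seconds) and remaining_mb is what is left
    to copy once the VM is finally paused.
    Assumption: the guest dirties memory at a constant rate.
    """
    passes = []
    to_send = memory_mb                    # first pass copies everything
    for n in range(1, max_passes + 1):
        seconds = to_send / link_mb_s
        passes.append((n, to_send, seconds))
        # Pages dirtied while this pass was on the wire must be resent.
        to_send = min(memory_mb, dirty_rate_mb_s * seconds)
        if to_send <= stop_threshold_mb:
            break                          # small enough for the final switch-over
    return passes, to_send


# 64 GB VM, roughly 10 GbE (1250 MB/s), guest dirtying 250 MB/s
passes, remaining = precopy_passes(65536, 250, 1250)
for n, mb, sec in passes:
    print(f"pass {n}: {mb:10.1f} MB in {sec:6.2f} s")
print(f"final switch-over copies {remaining:.1f} MB")
```

Each pass only has to resend what was dirtied during the previous one, so the amount shrinks quickly as long as the link is faster than the dirty rate. If the guest dirties memory faster than the link can carry it, this loop never converges.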

They're using terms like “dirty memory pages” or “dirty pages”, “cold pages”…

A quick quote from the YouTube page:

The new and improved VMware vMotion in VMware vSphere 5.0 incorporates a number of enhancements that leverage the power of the solid state drive and 10 gigabit Ethernet technologies that are increasingly adopted in today's datacenters. These enhancements vastly improve both the usability and performance of vMotion. Testing in VMware performance labs shows it is easier than ever to use vMotion to manage even large virtual machines running heavy-duty, enterprise-class applications with minimal overhead. This session will describe the vMotion architecture and the new and enhanced features of vMotion in vSphere 5.0. Performance implications with data from a wide variety of tier 1 application workloads will also be discussed. We will share common pitfalls as well as best practices that enable you to get the maximum benefit from the new and improved vMotion technology.

There is a lot of new stuff that I learned. vMotion handling has changed in each version of ESX, and in ESXi 5 the vMotion process has been improved again.

– multiple vMotion NICs – up to 16 NICs! (I'm not sure if a configuration with 16 NICs is really supported…)

– SDPS (stun during page-send) which brings more control into the monitoring of the vMotion process

The whole process is described in this video. There is a comparison with vSphere 4.1 as well. In one chart I saw a 37% improvement in vMotion duration between vSphere 4.1 and vSphere 5, which is considerable.

The best practices?

– Switch to 10 GbE for the vMotion network if you can

– When using multiple 10 GbE NICs for vMotion, configure them all on one vSwitch

– Don't use the local SSD to place VM swap files, since it can impact the vMotion performance.

– Metro vMotion – (vMotion on long latency networks … 10 ms).
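One way to see why latency matters for Metro vMotion: vMotion traffic runs over TCP, and a TCP sender can only keep a link busy if it has roughly one bandwidth-delay product of data in flight. The sketch below is just that arithmetic; the function name is mine, and I'm assuming the 10 ms figure is the round-trip time.

```python
def bandwidth_delay_product_bytes(link_gbit_per_s: float, rtt_ms: float) -> float:
    """Bytes that must be in flight to keep the link full.

    Assumption: rtt_ms is the round-trip time between the two hosts.
    """
    bits_in_flight = link_gbit_per_s * 1e9 * rtt_ms / 1e3
    return bits_in_flight / 8


# 10 GbE link with a 10 ms round trip
bdp = bandwidth_delay_product_bytes(10, 10)
print(f"{bdp / 1e6:.1f} MB in flight")
```

On a LAN with a sub-millisecond round trip the same link needs far less data in flight, which is why long-distance links need extra care.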

But there is more….

Enjoy…-:)

Source: VMwareKB

Hi Vladan,

according to the slides the speakers are:

– Sreekanth Setty, VMware R&D, VMware, Inc.

– Gabriel Tarasuk-Levin, VMware R&D, VMware, Inc.

Thanks, I’ll update the post.

Vladan

Excellent explanation of vMotion technology. Keep up the great work Vladan.

Hello there, how big does the vMotion VMkernel port IP address range need to be for, let's say, 200 VMs? Does a VM always need to get an IP address from this range for vMotion, or how is this done?