Another must-have document if you're into VSAN. This one is called VMware Virtual SAN Diagnostics and Troubleshooting Reference Manual and is written by Cormac Hogan (VMware), but this time it does not have 80 pages, but 295! It's not a simple PDF but rather a book which you can download for free.

It's a very good document, again, like the VMware Virtual SAN 6.0 Design And Sizing Guide that I detailed in my previous post. The author teaches you how to check whether a disk controller (with its firmware) is on the VSAN HCL (hardware compatibility list), which CLI commands you need to run to get the information (firmware levels), and so on. And this is just to start with. At the end, there is monitoring through the Ruby vSphere Console and the vSphere Web Client. All this comes with theory about VSAN components and terminology.

It's interesting to see the impact of the number of disk groups per server on memory. A single disk group requires 6 GB of RAM, whereas 5 disk groups with 7 magnetic disks each require 32 GB of RAM. I could see this during my testing of VSAN in my lab, where I had only a single disk group on my whitebox with 24 GB of RAM. Memory consumption was higher than without VSAN activated, and as a result I could run fewer VMs on that host. This was of course unsupported lab hardware with a small amount of memory. But when architecting VSAN solutions it's certainly a parameter to take into account.

VMware Virtual SAN Diagnostics and Troubleshooting Reference Manual

So let's see what's inside…

First of all, I think that this is a real book. But you get it for free. Nice. I think that this guide is very exhaustive and goes really deep to allow VMware admins, architects and IT professionals to master VMware VSAN. The book has

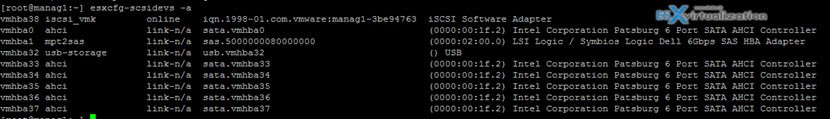

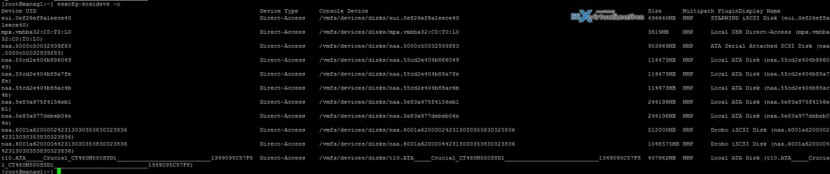

Different commands help identify disk types and storage controllers, and give details about all logical devices.

Example:

esxcfg-scsidevs -a

gives you a simple view of the HBAs and their attachment types, with some details.

But this one:

esxcfg-scsidevs -c

gives you further details.

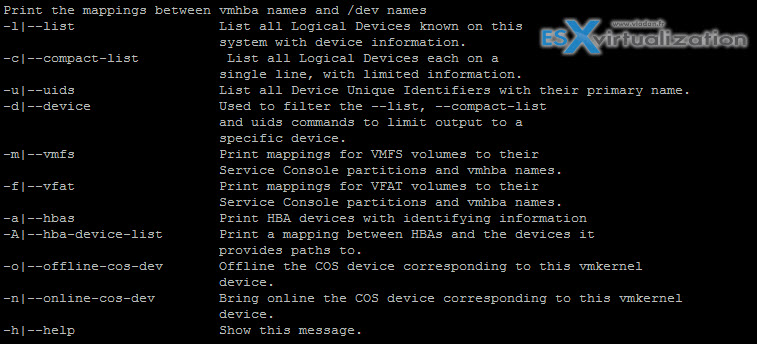

More options – get help with “?” at the end. Ex:

esxcfg-scsidevs -?

gives you this

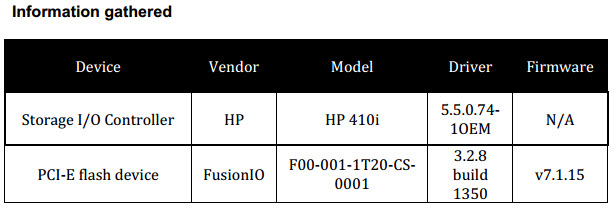

But let's get back to the paper, as there are more commands to gather information from the underlying hardware, including driver versions and firmware versions. The document is a must-have to be able to gather all that information.

As an example, you can see the information gathered in the document.

This clearly shows the importance of the VMware HCL for VSAN, but it can be used as a guideline for other hardware too. Cormac also shows an example of unsupported hardware: he walks you through a search on the VMware HCL site (with screenshots) to finally show that the particular storage controller isn't supported for VSAN.

But this document does not focus on hardware only. It also teaches the general VSAN terminology: what the different components of VSAN are, like replicas and witnesses, and their impact on the availability of VMs. He also points out the differences between VSAN 5.5 and VSAN 6.0, because some things have changed. For example:

Quote:

In Virtual SAN 5.5, for a virtual machine deployed on a Virtual SAN datastore to remain available, greater than 50% of the components that make up a virtual machine’s storage object must be accessible. If less than 50% of the components of an object are accessible across all the nodes in a Virtual SAN cluster, that object will no longer be accessible on a Virtual SAN datastore, impacting the availability of the virtual machine. Witnesses play an important role in ensuring that more than 50% of the components of an object remain available.

Important: This behaviour changed significantly in Virtual SAN 6.0. In this release, a new way of handling quorum is introduced. There is no longer a reliance on “more than 50% of components”. Instead, each component has a number of “votes”, which may be one or more. To achieve quorum in Virtual SAN 6.0, “more than 50 percent of votes” is needed.
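To make the difference concrete, here is a minimal Python sketch of the two quorum rules quoted above. The function names, component names and vote counts are my own illustration. They are not taken from the manual or from any VSAN API.

```python
def accessible_55(total_components, reachable_components):
    """VSAN 5.5 rule: more than 50% of the components must be reachable."""
    return reachable_components * 2 > total_components


def accessible_60(votes, reachable):
    """VSAN 6.0 rule: more than 50% of the votes must be reachable.

    votes:     dict mapping a (hypothetical) component name to its vote count
    reachable: set of component names that can currently be reached
    """
    total = sum(votes.values())
    have = sum(v for name, v in votes.items() if name in reachable)
    return have * 2 > total


# Hypothetical object: two replicas plus a witness, one vote each.
votes = {"replica-a": 1, "replica-b": 1, "witness": 1}
print(accessible_55(3, 2))                             # True
print(accessible_60(votes, {"replica-a", "witness"}))  # True
print(accessible_60(votes, {"replica-b"}))             # False
```

With one vote per component both rules give the same answer. They only diverge once some components carry more than one vote.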

Info about failures:

This is an interesting read as well, where you'll see the difference between an Absent component and a Degraded component. An Absent component triggers a 60-minute wait (to see if it comes back), whereas a Degraded component triggers remediation immediately.
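The Absent versus Degraded behaviour can be summed up in a few lines of Python. This is only a sketch of the rule as described here: the function and constant names are hypothetical, and the 60 minutes is the wait time mentioned above, not a value read from a real cluster.

```python
ABSENT_WAIT_MINUTES = 60  # wait time for Absent components, as described in the post


def minutes_until_rebuild(state):
    """Return how long VSAN waits before it starts rebuilding a component."""
    state = state.lower()
    if state == "absent":
        # The component may come back (e.g. host reboot), so VSAN waits first.
        return ABSENT_WAIT_MINUTES
    if state == "degraded":
        # The component is not expected to come back, so rebuild starts at once.
        return 0
    raise ValueError(f"unknown component state: {state}")


print(minutes_until_rebuild("Absent"))    # 60
print(minutes_until_rebuild("Degraded"))  # 0
```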

What happens when a cache tier SSD fails?

It depends. If you've configured NumberOfFailuresToTolerate=1, then you're OK for the rebuild, but if you're on NumberOfFailuresToTolerate=0 then:

If the VM Storage Policy has NumberOfFailuresToTolerate=0, the VMDK will be inaccessible if one of the VMDK components (think one component of a stripe) exists on disk group whom the pulled SSD belongs to. There is no option to recover the VMDK. This may require a restore of the VM from a known good backup.
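As a rough decision sketch of the two cases above (the function name, parameters and return strings are my own and purely illustrative; the sketch assumes the surviving copies are healthy):

```python
def vmdk_outcome(failures_to_tolerate, has_component_on_failed_disk_group):
    """Describe what happens to a VMDK when a cache tier SSD fails.

    failures_to_tolerate: the NumberOfFailuresToTolerate policy value
    has_component_on_failed_disk_group: True if any component of the VMDK
        lives on the disk group that the failed SSD belongs to
    """
    if not has_component_on_failed_disk_group:
        return "unaffected"
    if failures_to_tolerate == 0:
        # No other copy exists: restore from a known good backup.
        return "inaccessible"
    # At least one other replica exists, so the components can be rebuilt.
    return "rebuild"


print(vmdk_outcome(1, True))   # rebuild
print(vmdk_outcome(0, True))   # inaccessible
print(vmdk_outcome(0, False))  # unaffected
```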

What happens when a disk fails?

Same thing as above…

It's a well-written deep-dive manual for VSAN. If you're an IT admin or a VMware architect, you'll need both documents! This document seems to go much deeper on the technical side, however, and shows pretty much every single aspect of VSAN troubleshooting.

Get this document from this link: Diagnostics and troubleshooting Reference Manual – Virtual SAN


Great Article Vladan ! THX