Since vSphere 7 U2, vSphere vMotion performance has been better optimized out of the box. In this post, we'll highlight some details that you might or might not be aware of. As you know, vMotion performance increases as you add more network bandwidth to your hosts, which basically means adding more physical NICs to your ESXi hosts.

You can add more 10Gb/s or faster vMotion NICs for maximum vMotion performance. VMware recommends that all vMotion vmknics on a host share a single vSwitch. Each vmknic's portgroup should be configured to use a different physical NIC as its active vmnic. In addition, all vMotion vmknics should be on the same vMotion network.

Previous releases of vSphere required some manual tweaks to fully leverage the network bandwidth on NICs faster than 10Gb/s. This manual configuration is no longer necessary if you're using vSphere 7 U2 or higher.

VMware has previously recommended configuring three vMotion vmknics on networks faster than 10Gb/s in order to achieve a full line rate for vMotion operations. However, since vSphere 7.0 Update 2, this is no longer necessary. Now, the vMotion process automatically creates the number of streams needed in order to fully utilize the bandwidth of these faster physical NICs.

Automatic adjustment for High Bandwidth

vSphere 7 Update 2 introduces a new mechanism: the vMotion process automatically “opens” the number of streams needed for the bandwidth of the physical NICs used for the vMotion network(s).

vSphere checks the VMkernel interfaces that you have enabled for vMotion, as well as the bandwidth of the underlying physical NICs. Depending on the results, it calculates the number of streams to initiate.

As they say in the article,

“The baseline is 1 vMotion stream per 15 GbE of bandwidth”

Number of streams per VMkernel interface:

- 25 GbE = 2 vMotion streams

- 40 GbE = 3 vMotion streams

- 100 GbE = 7 vMotion streams
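The figures in the list are consistent with dividing the NIC speed by the 15 GbE baseline and rounding up. Here is a minimal Python sketch of that arithmetic. The function name `vmotion_streams` and the round-up rule are assumptions inferred from the three data points above, not VMware's actual implementation.

```python
import math

# Baseline quoted above: 1 vMotion stream per 15 GbE of bandwidth.
GBE_PER_STREAM = 15


def vmotion_streams(nic_speed_gbe: float) -> int:
    """Estimate the stream count for one vMotion VMkernel interface.

    Rounding up (and never going below one stream) is an assumption
    that happens to match the 25/40/100 GbE figures in the list.
    """
    if nic_speed_gbe <= 0:
        raise ValueError("NIC speed must be positive")
    return max(1, math.ceil(nic_speed_gbe / GBE_PER_STREAM))


if __name__ == "__main__":
    for speed in (25, 40, 100):
        print(f"{speed} GbE -> {vmotion_streams(speed)} vMotion streams")
```

For 25, 40 and 100 GbE this gives 2, 3 and 7 streams, the same values as in the list.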

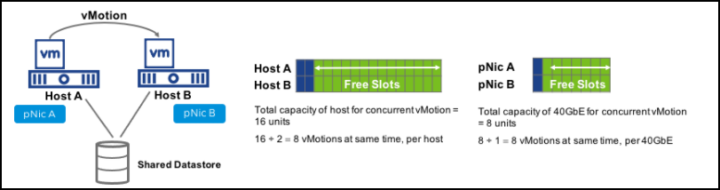

How about concurrent vMotion Operations?

This automatic optimization does not change the limits on the number of concurrent vMotion operations. Those stay the same (but can be overridden through advanced settings). VMware does not recommend changing those settings.

Quote:

vCenter Server limits the concurrent vMotions per host to ensure resource balance and get the best overall performance. These limits are chosen carefully after extensive experimentation, and hence we strongly discourage changing the limits to increase concurrency.

There is a good post from VMware called vCenter Limits for Concurrent vMotion. You can have a look here for the details.

You can learn about the latest performance settings in the newest PDF from VMware called “Performance Best Practices for VMware vSphere 7.0, Update 3”.

Quote from the paper:

While a vMotion operation is in progress, ESXi opportunistically reserves CPU resources on both the source and destination hosts in order to ensure the ability to fully utilize the network bandwidth. ESXi will attempt to use the full available network bandwidth regardless of the number of vMotion operations being performed. The amount of CPU reservation thus depends on the number of vMotion NICs and their speeds; 10% of a processor core for each 1Gb/s network interface, 100% of a processor core for each 10Gb/s network interface, and a minimum total reservation of 30% of a processor core. Therefore leaving some unreserved CPU capacity in a cluster can help ensure that vMotion tasks get the resources required in order to fully utilize available network bandwidth.

Source: VMware Performance Best Practices for VMware vSphere 7.0, Update 3 PDF.
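To make the reservation rule from the quote concrete, here is a small Python sketch of the arithmetic. The function name `vmotion_cpu_reservation_pct` is hypothetical, and the sketch only covers the two NIC speeds the paper mentions (1Gb/s and 10Gb/s). How faster NICs are treated is not stated in the quote, so other speeds are rejected instead of guessed.

```python
# Per-NIC reservation, in percent of one processor core, as quoted above.
# Only 1Gb/s and 10Gb/s appear in the quote; other speeds are not covered.
PER_NIC_CORE_PCT = {1: 10, 10: 100}

# Minimum total reservation quoted above: 30% of a processor core.
MIN_TOTAL_PCT = 30


def vmotion_cpu_reservation_pct(nic_speeds_gbps):
    """Return the total reservation as a percentage of one core."""
    total = 0
    for speed in nic_speeds_gbps:
        if speed not in PER_NIC_CORE_PCT:
            raise ValueError(f"no rule quoted for a {speed}Gb/s NIC")
        total += PER_NIC_CORE_PCT[speed]
    # The quoted minimum applies to the total, not to each NIC.
    return max(total, MIN_TOTAL_PCT)


if __name__ == "__main__":
    for nics in ([1], [1, 1, 1, 1], [10], [10, 10]):
        pct = vmotion_cpu_reservation_pct(nics)
        print(f"vMotion NICs {nics} Gb/s -> {pct}% of a core")
```

With a single 1Gb/s NIC the 30% minimum applies, while two 10Gb/s NICs work out to two full cores on each host taking part in the migration.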
