Not all workloads are equal, and not all of them need the best-in-class, fastest (and most expensive) SSDs. The Saber 1000 positions itself in the entry-level enterprise segment for read-intensive applications. The drive's endurance is guaranteed for 5 years at 0.4 DWPD (drive writes per day).

While that might seem low, the drive is aimed at read-intensive applications, and the guarantee still allows 384 GB of writes per day for 5 years (for the 960 GB model of the Saber 1000). Does your environment need more? That is the question to ask.
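The endurance figure is simple arithmetic, and a minimal Python sketch makes it easy to redo for other capacities. The function names are illustrative, and decimal units (1 TB = 1000 GB) and 365-day years are assumptions on my side.

```python
def daily_write_budget_gb(capacity_gb: float, dwpd: float) -> float:
    """GB that may be written per day under the rated DWPD."""
    return capacity_gb * dwpd


def lifetime_write_budget_tb(capacity_gb: float, dwpd: float, years: float) -> float:
    """Total TB that may be written over the warranty period.

    Assumes 365-day years and decimal units (1 TB = 1000 GB).
    """
    return daily_write_budget_gb(capacity_gb, dwpd) * 365 * years / 1000


if __name__ == "__main__":
    # Capacities mentioned in this review: the 240 GB test unit and the 960 GB top model.
    for capacity_gb in (240, 960):
        per_day = daily_write_budget_gb(capacity_gb, 0.4)
        lifetime = lifetime_write_budget_tb(capacity_gb, 0.4, 5)
        print(f"{capacity_gb} GB model: {per_day:.0f} GB/day, {lifetime:.1f} TB over 5 years")
```

For the 960 GB model this works out to 384 GB per day, or roughly 700 TB written over the five-year warranty.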

Note: This review was sponsored by OCZ Storage Solutions, a Toshiba Group Company.

The transition away from spinning media at the enterprise level is happening now. Newly designed server rooms and datacenters benefit from flash, which is many times faster than spinning media, provides sub-millisecond access times, and generates less heat. Only advantages. Where admins need reassurance is usually the endurance of the silicon. That's why vendors have introduced DWPD ratings and multi-year warranties, which let you calculate how long the drive should perform without issue. It is important to be sure your server is not going to destroy that SSD with writes after a few months…

This means you should monitor your workloads before you start looking for the right type of SSD. If you're using a Windows file server, for example, this can be done with Microsoft's built-in Windows Performance Monitor, which provides disk metrics such as % Disk Time, Avg. Disk Queue Length, Disk Transfers/sec, and Disk Bytes/sec.
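Once you have an average write rate for a volume, you can translate it into drive writes per day and compare it with a drive's rating. The sketch below assumes you have already collected that average yourself (for example from the Disk Write Bytes/sec counter, the write-only counterpart of Disk Bytes/sec). The function names and the 2 MB/s sample value are hypothetical, not measurements from this review.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def observed_dwpd(avg_write_bytes_per_sec: float, capacity_gb: float) -> float:
    """Convert a sustained average write rate into drive writes per day.

    Uses decimal gigabytes (1 GB = 10**9 bytes), as drive vendors do.
    """
    written_gb_per_day = avg_write_bytes_per_sec * SECONDS_PER_DAY / 1e9
    return written_gb_per_day / capacity_gb


def fits_endurance(avg_write_bytes_per_sec: float, capacity_gb: float, rated_dwpd: float) -> bool:
    """True if the observed write load stays within the drive's rated DWPD."""
    return observed_dwpd(avg_write_bytes_per_sec, capacity_gb) <= rated_dwpd


if __name__ == "__main__":
    sample_rate = 2_000_000  # hypothetical: 2 MB/s of writes averaged over a full day
    for capacity_gb in (240, 960):
        dwpd = observed_dwpd(sample_rate, capacity_gb)
        verdict = "within" if fits_endurance(sample_rate, capacity_gb, 0.4) else "above"
        print(f"{capacity_gb} GB drive: {dwpd:.2f} DWPD, {verdict} the 0.4 DWPD rating")
```

The averaging window matters: a rate sampled only during business hours will overstate the daily total, so it is safer to log the counter over at least a full day.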

Saber is certainly not a good candidate for caching, for example as the caching tier in a VMware VSAN architecture. But the Saber 1000 should be a good fit as a capacity tier in an All-Flash VSAN architecture, where reads take place more often than writes, because “hot data” is de-staged to the capacity tier only when it becomes cold. The caching layer would probably be handled by other, high-endurance SSDs, for example PCIe-based SSDs like the OCZ Z-Drive 4500 cards.

One could also imagine running other types of hyper-converged solutions, for example Atlantis USX from Atlantis Computing, which can leverage any type of local storage (HDD, SSD…) and turn it into a pooled datastore with additional data services on top that save space (online deduplication and compression).

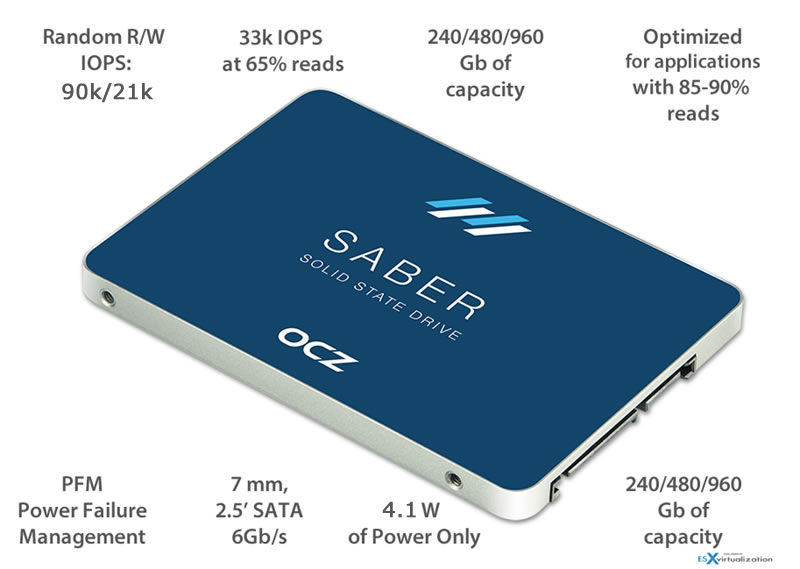

Saber 1000 Features

- Leverages OCZ’s Barefoot 3 controller, in-house firmware, and next generation A19nm NAND from Toshiba

- Delivers sustained performance and consistent I/O responses for high-volume deployment enterprise SATA SSDs

- Features capacities up to 960GB

- Supports Power Failure Management Plus (PFM+) to protect against unexpected power loss events

- Energy savings resulting from low 4.1 W operating power (4.1 W active, 0.6 W idle; worst-case figures)

- 2.5-inch, 7mm enclosure footprint

- Backed by a 5-year warranty with enterprise support

In case of power failure, the Saber can also rely on Power Failure Management Plus (PFM+), which keeps the SSD circuitry powered long enough to ensure each SSD’s integrity, so that it can be fully operational again when power is restored. PFM+ differs from normal PFM in that it has been engineered with extra physical capacitance inside the Saber, so when an unsafe power loss happens the drive has more time to power off safely.

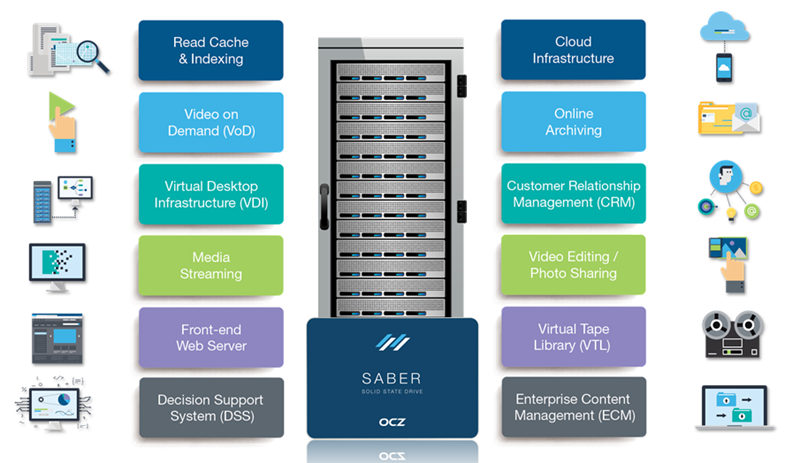

A picture of possible use cases of SABER 1000:

The Test Setup

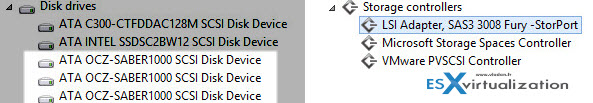

The test system is one of our newer machines, based on a Supermicro single-CPU board (X10SRH-CLN4F) with an LSI 3008 HBA (12 Gbps SAS connectivity), which I passed through to the VM for the purpose of this test. I also built a new VM with one Saber 1000 attached as a data disk and another attached without a partition, so that I could run a few tests with IOmeter, which requires a drive without a partition.

Here is the setup showing the VM's hardware details. As you can see, the storage controller is passed directly through to the VM and appears in the hardware list, along with all the disks attached to this card.

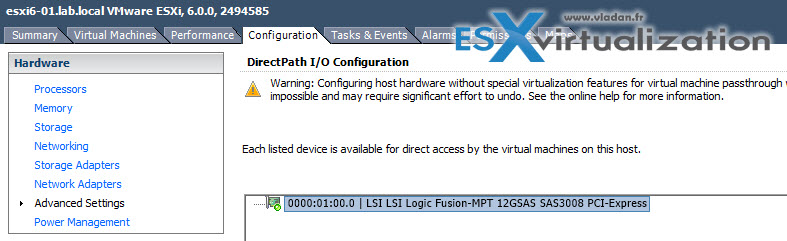

The LSI 3008 is a 12 Gbps SAS card (third-generation SAS). The card is on the VMware HCL, and also on the VSAN HCL. The screenshot below shows the pass-through configuration in the vSphere client. I configured the pass-through so that the VM sees this card directly, without going through the virtualization layer.

The Saber 1000 is a SATA III drive available in capacities up to 960 GB; its 19nm NAND flash from Toshiba is coupled with OCZ’s own Barefoot 3 controller.

In a steady-state condition, in which the Saber 1000 Series is writing, erasing and re-writing data repeatedly over its full capacity, Toshiba states the following specifications for the 240 GB model:

- 550 MB/s for sequential reads (128KB blocks)

- 500 MB/s for sequential writes (128KB blocks)

- 90,000 IOPS for random reads (4KB blocks)

- 21,000 IOPS for random writes (4KB blocks)
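To compare the random figures with the sequential ones, it helps to convert IOPS into throughput. The following is a small sketch of that conversion; it assumes a 4KB block is 4096 bytes and that MB/s means 10^6 bytes per second, and the function name is mine.

```python
def iops_to_mb_per_s(iops: float, block_bytes: int) -> float:
    """Throughput in MB/s (10**6 bytes per second) for a given IOPS rate and block size."""
    return iops * block_bytes / 1e6


if __name__ == "__main__":
    block = 4096  # assumption: "4KB" in the spec sheet means 4096 bytes
    print(f"random read:  {iops_to_mb_per_s(90_000, block):.1f} MB/s")
    print(f"random write: {iops_to_mb_per_s(21_000, block):.1f} MB/s")
```

Under those assumptions, 90,000 random read IOPS correspond to about 369 MB/s and 21,000 random write IOPS to about 86 MB/s. In other words, steady-state random writes are where this drive is furthest from its 500 MB/s sequential write figure.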

Enterprise SSDs are generally measured at steady state under a full workload, in 24-hours-a-day, seven-days-a-week operation. Such constant usage requires a substantially different design, with fewer errors, higher general performance and greater data integrity.

Typical read intensive applications would be:

- Indexing

- Front-end Web Servers

- Media Streaming

- Virtual Tape Libraries (VTL)

- Video on Demand (VOD)

- Cloud Service Provider

- File-Servers: 80% read / 20% write (e.g. a storage/file server used as an archive, writing data once for later read operations)

- Database-Server: 67% read / 33% write

- Webserver: 100% read

Applications mentioned above could be tested with IOmeter mixed workload benchmark.

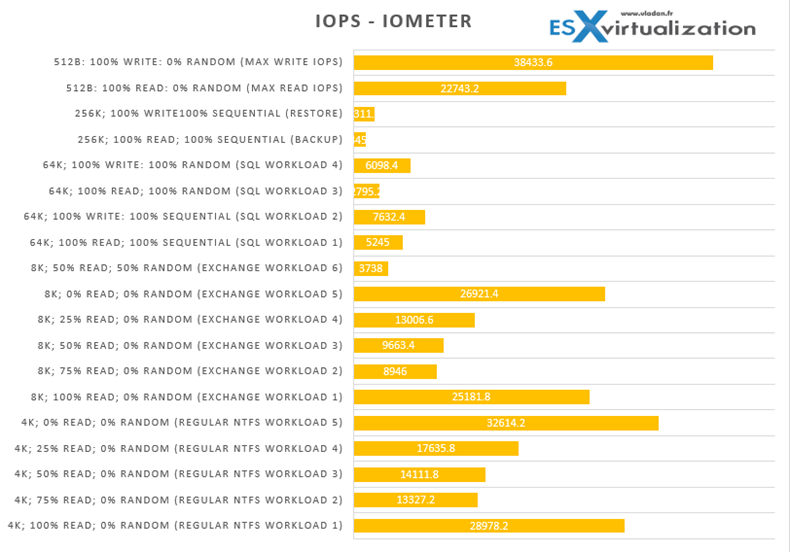

The model tested was the 240 GB capacity. My tests were run with IOmeter and HD Tune Pro (trial). There is an Open IOmeter benchmark file available through the VMware community, hosted here, that provides the most common workloads, such as regular NTFS, Exchange and SQL-based workloads. The test specs below show the workload settings. Each test ran in the lab for over 24 hours, and I performed 7 iterations from which I calculated the averages.

4K; 100% Read; 0% random (Regular NTFS Workload 1)

4K; 75% Read; 0% random (Regular NTFS Workload 2)

4K; 50% Read; 0% random (Regular NTFS Workload 3)

4K; 25% Read; 0% random (Regular NTFS Workload 4)

4K; 0% Read; 0% random (Regular NTFS Workload 5)

8K; 100% Read; 0% random (Exchange Workload 1)

8K; 75% Read; 0% random (Exchange Workload 2)

8K; 50% Read; 0% random (Exchange Workload 3)

8K; 25% Read; 0% random (Exchange Workload 4)

8K; 0% Read; 0% random (Exchange Workload 5)

8K; 50% Read; 50% random (Exchange Workload 6)

64K; 100% Read; 100% sequential (SQL Workload 1)

64K; 100% Write; 100% sequential (SQL Workload 2)

64K; 100% Read; 100% Random (SQL Workload 3)

64K; 100% Write; 100% Random (SQL Workload 4)

256K; 100% Read; 100% sequential (Backup)

256K; 100% Write; 100% sequential (Restore)

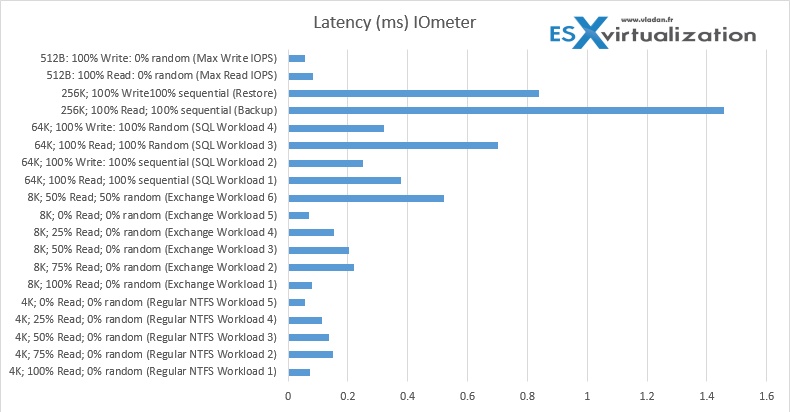

And here are the latency tests. As you can see, most of the time we have sub-ms latency on all workloads…

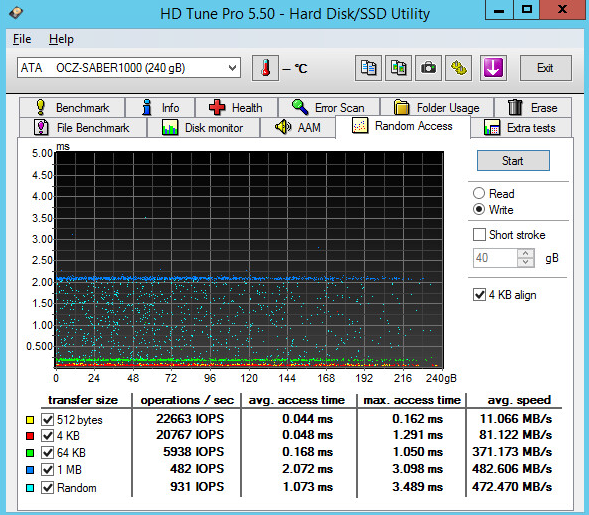

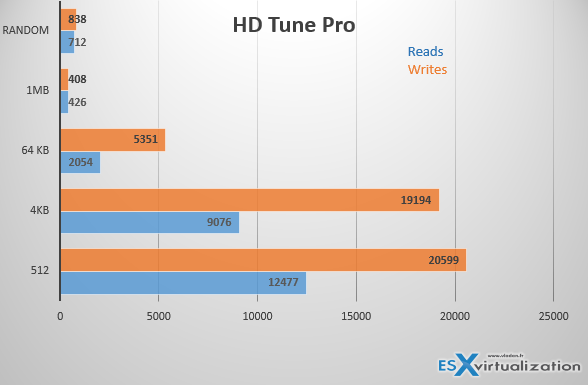

Other tests done with HD Tune Pro (trial edition)

Each test was run 10 times, and the results were then averaged.

You can find the complete tests in the graph below:

Note that these tests were conducted after the latest firmware update. The latest firmware tested was version 1.01, which brought further updates to robustness, functionality and compliance. Check the release notes here.

According to SNIA, the performance of an SSD normally starts to degrade once the drive has been rewritten twice (e.g. 240GB written to a 120GB drive), so we aimed to report on products which not only start fast but also stay fast. Other tests either focus on read performance (which does not degrade, as in PC Mark) or write only small amounts of data (like 1GB for CDM), so of these IOmeter is the only test able to give an indication of what performance will end up like.

Please note that this is of course not a workload that SSDs will normally encounter; it is designed to show what a drive will end up like after several years of use. For this test I had to make sure that no partitions were present on the drive, so that the drive is tested without any NTFS caching that Windows might perform; the results are therefore applicable to all platforms, including Mac and Linux.
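As a rough illustration of the "rewritten twice" rule, the sketch below estimates how much data has to be written to a drive before it reaches that point, and the minimum time this takes at a given sequential write speed. The function names are mine, and the time is a lower bound only: it assumes the drive sustains its rated sequential write speed throughout, which a drive heading into steady state typically will not.

```python
def precondition_volume_gb(capacity_gb: float, passes: int = 2) -> float:
    """Data to write so that the whole drive has been rewritten `passes` times."""
    return capacity_gb * passes


def min_precondition_minutes(capacity_gb: float, seq_write_mb_s: float, passes: int = 2) -> float:
    """Lower bound on fill time, assuming the rated sequential write speed is sustained."""
    volume_mb = precondition_volume_gb(capacity_gb, passes) * 1000  # 1 GB = 1000 MB
    return volume_mb / seq_write_mb_s / 60


if __name__ == "__main__":
    # The 240 GB test unit with its rated 500 MB/s sequential write speed.
    print(precondition_volume_gb(240), "GB to write")
    print(min_precondition_minutes(240, 500), "minutes at best")
```

For the 240 GB model that is 480 GB of writes and at least 16 minutes, which is negligible next to the 24-hour runs used for each test here.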

Warranty

Client drives are generally not validated on enterprise hosts, but the Saber 1000 was specifically tested for interoperability in enterprise environments. This means that there is not only a 5-year warranty, but also an enterprise service and support team should it be necessary. Typical client products do not have that level of “hands-on” support.

Wrap-Up

The Saber 1000 is an entry-level enterprise drive targeting workloads where you need fast reads and some writes. It is ideally suited to capacity tiers, but the guarantee of 0.4 drive writes per day (384 GB of writes per day for the 960 GB model) offers more than enough security when it comes to writes. Would I prefer SAS spinning media or a SATA SSD? I think the second one!