We continue to cover the VCP-DCV 2021 certification based on the vSphere 7 product. The previous release of our guide, based on the vSphere 6.7 product, is still extremely valuable for those who are taking that certification. You can check the detailed page here – VCP6.7-DCV Study Guide Page.

Let's get started. vSphere can use several types of storage and we'll see the details of what you need to know here.

Local and Networked storage – while local storage is pretty obvious (direct-attached disks or DAS), networked storage comes in different types but, most importantly, can be shared and accessed by multiple hosts simultaneously.

VMware supports virtualized shared storage, such as vSAN. vSAN transforms internal storage resources of your ESXi hosts into shared storage. ESXi supports SCSI, IDE, SATA, USB, SAS, flash, and NVMe devices. You cannot use IDE/ATA or USB to store your VMs.
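The rule in the paragraph above can be written down as a small lookup. This is only a study aid: the set names and the `can_store_vms` function are hypothetical and not part of any VMware tool or API. They simply encode the device types listed here and the two types that cannot hold VMs.

```python
# Device types ESXi supports, as listed in the paragraph above (upper-cased).
SUPPORTED_DEVICE_TYPES = {"SCSI", "IDE", "SATA", "USB", "SAS", "FLASH", "NVME"}

# Device types that are supported but cannot be used to store VMs.
NOT_FOR_VM_STORAGE = {"IDE", "USB"}


def can_store_vms(device_type: str) -> bool:
    """Return True if the given device type may hold virtual machines.

    Hypothetical helper for revision purposes only.
    """
    kind = device_type.strip().upper()
    if kind not in SUPPORTED_DEVICE_TYPES:
        raise ValueError(f"unsupported device type: {device_type!r}")
    return kind not in NOT_FOR_VM_STORAGE


for kind in ("NVMe", "SAS", "USB", "IDE"):
    print(kind, can_store_vms(kind))
```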

vSphere and ESXi support network storage based on the NFS 3 and NFS 4.1 protocols for file-based storage. This type of storage is presented to the host as a share instead of as block-level raw disks.

The main problem with DAS is that only the server where the storage is physically installed can use it, not any other machine within your cluster. That's why it is far better to use shared storage with NFS, iSCSI or FC.
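To make the DAS limitation concrete, here is a minimal sketch that checks whether every host in a cluster can reach a datastore. The `Datastore` class, the `shared_by_all` function, and the host and datastore names are all made up for illustration. The sketch does not query a real vCenter.

```python
from dataclasses import dataclass, field


@dataclass
class Datastore:
    """Toy model: a datastore and the hosts that can access it."""
    name: str
    hosts: set = field(default_factory=set)


def shared_by_all(ds: Datastore, cluster_hosts) -> bool:
    """True if every host in the cluster can access the datastore."""
    return set(cluster_hosts) <= ds.hosts


# Hypothetical three-host cluster.
cluster = ["esxi01", "esxi02", "esxi03"]

# DAS: only the host that physically holds the disks sees it.
local = Datastore("local-esxi01", {"esxi01"})

# Shared storage (NFS, iSCSI or FC): presented to every host.
shared = Datastore("iscsi-lun01", {"esxi01", "esxi02", "esxi03"})

for ds in (local, shared):
    print(ds.name, "usable by the whole cluster:", shared_by_all(ds, cluster))
```

A VM on the local datastore is tied to `esxi01`, while a VM on the shared one can run on any host in the cluster.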

Fibre Channel (FC) storage – FC SAN is a specialized high-speed network that connects your hosts to high-performance storage devices. The network uses Fibre Channel protocol to transport SCSI traffic from virtual machines to the FC SAN devices. The host should have Fibre Channel host bus adapters (HBAs).


- The exam duration is 130 minutes

- The number of questions is 70

- The passing score is 300

- The price is $250.00

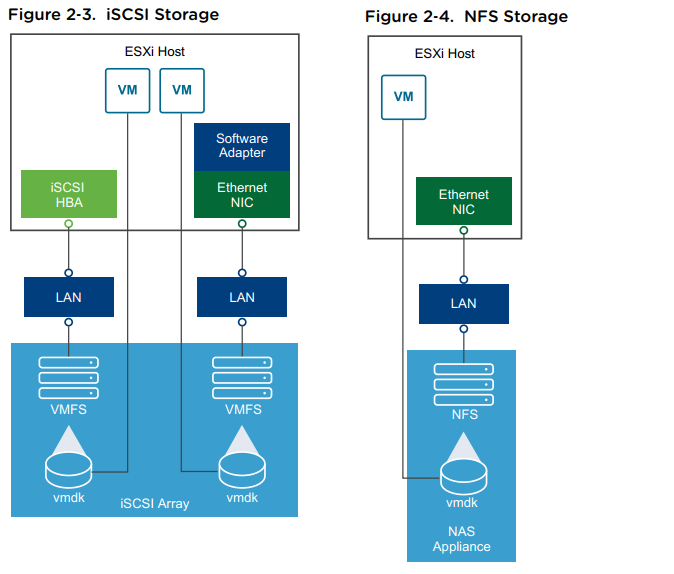

Internet SCSI (iSCSI) storage – Stores virtual machine files on remote iSCSI storage devices. iSCSI packages SCSI storage traffic into the TCP/IP protocol, so that it can travel through standard TCP/IP networks instead of the specialized FC network. With an iSCSI connection, your host serves as the initiator that communicates with a target, located in remote iSCSI storage systems.

Storage Device or LUN – the terms device and LUN are used interchangeably. Typically, both terms mean a storage volume that is presented to the host from a block storage system and is available for formatting.

ESXi offers the following types of iSCSI connections:

- Hardware iSCSI – Your host connects to storage through a third-party adapter capable of offloading the iSCSI and network processing. Hardware adapters can be dependent or independent.

- Software iSCSI – Your host uses a software-based iSCSI initiator in the VMkernel to connect to storage. With this type of iSCSI connection, your host needs only a standard network adapter for network connectivity. You must configure iSCSI initiators for the host to access and display iSCSI storage devices.

Shared Serial Attached SCSI (SAS) – Stores virtual machines on direct-attached SAS storage systems that offer shared access to multiple hosts. This type of access permits multiple hosts to access the same VMFS datastore on a LUN.

Network storage – this type of storage is usually based on dedicated enclosures whose controllers typically run Linux or another specialized OS. They are now commonly equipped with 10GbE NICs, but this wasn't always the case. It allows multiple hosts within your environment to connect directly to the storage and share it among those hosts.

VMware supports a newer type of adapter known as iSER, or iSCSI Extensions for RDMA. This allows ESXi to use the RDMA protocol instead of TCP/IP to transport iSCSI commands, which can be much faster.

A few more storage types:

- VMware FileSystem (VMFS) datastores: All block-based storage must first be formatted with VMFS to turn a block service into a file- and folder-oriented service

- Network FileSystem (NFS) datastores: This is for NAS storage

- VVol: introduced in vSphere 6.0, Virtual Volumes is a new paradigm for accessing SAN and NAS storage in a common way, with better integration and use of storage array capabilities. With Virtual Volumes, an individual virtual machine, not the datastore, becomes the unit of storage management, and the storage hardware gains complete control over virtual disk content, layout, and management.

- vSAN datastore: If you are using a vSAN solution, all your local storage devices can be pooled together into a single shared vSAN datastore. vSAN is a distributed layer of software that runs natively as a part of the hypervisor.

- Raw Device Mapping – RDM is useful when a guest OS inside a VM requires direct access to a storage device.
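As a quick revision aid, the first two bullets can be condensed into a lookup from the way storage is presented to the datastore type you end up with. The `datastore_type` function and the protocol labels are hypothetical. vSAN, vVol and RDM are left out on purpose because they are not chosen by transport protocol alone.

```python
# Block-level storage seen as raw disks/LUNs: must be formatted with VMFS.
BLOCK_PROTOCOLS = {"DAS", "FC", "iSCSI", "SAS"}

# File-level storage presented to the host as a share.
FILE_PROTOCOLS = {"NFS 3", "NFS 4.1"}


def datastore_type(protocol: str) -> str:
    """Return the datastore type for a storage protocol (study aid only)."""
    if protocol in BLOCK_PROTOCOLS:
        return "VMFS"
    if protocol in FILE_PROTOCOLS:
        return "NFS"
    raise ValueError(f"not covered by this sketch: {protocol!r}")


for proto in ("FC", "iSCSI", "NFS 4.1"):
    print(f"{proto:8} -> {datastore_type(proto)} datastore")
```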

VAAI – vSphere API for Array Integration – these APIs include several components. The Hardware Acceleration APIs help arrays integrate with vSphere so that certain storage operations can be offloaded to the array. This reduces CPU overhead on the host.

vSphere API for Multipathing – This is known as the Pluggable Storage Architecture (PSA). Its APIs allow storage partners to create and deliver multipathing and load-balancing plugins that are optimized for each array. Plugins talk to storage arrays and choose the best path selection strategy to increase I/O performance and reliability.
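To give a feel for what a path selection strategy does, here is a toy round-robin selector that rotates I/O across the available paths and skips any path that is down. It is a simplified illustration, not the code of any VMware or partner plugin. The `Path` and `RoundRobinSelector` classes and the example path names are assumptions made for this sketch.

```python
from dataclasses import dataclass


@dataclass
class Path:
    """One path from the host to a LUN (toy model)."""
    name: str
    active: bool = True


class RoundRobinSelector:
    """Rotate through paths, skipping any that are not active."""

    def __init__(self, paths):
        self.paths = list(paths)
        self._next = 0  # index of the path to try first on the next I/O

    def select(self) -> Path:
        count = len(self.paths)
        for offset in range(count):
            idx = (self._next + offset) % count
            if self.paths[idx].active:
                self._next = (idx + 1) % count
                return self.paths[idx]
        raise RuntimeError("all paths to the device are down")


# Two hypothetical paths to the same LUN through two HBAs.
paths = [Path("vmhba1:C0:T0:L0"), Path("vmhba2:C0:T0:L0")]
selector = RoundRobinSelector(paths)

# With both paths up, I/O alternates between them.
picks = [selector.select().name for _ in range(4)]
print(picks)

# If the first path fails, every I/O goes through the surviving path.
paths[0].active = False
after_failure = [selector.select().name for _ in range(2)]
print(after_failure)
```

The same structure shows why multipathing helps reliability as well as performance: losing one path only removes it from the rotation.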

Find other chapters on the main page of the guide – VCP7-DCV Study Guide – VCP-DCV 2021 Certification.

Thanks for reading and stay tuned for more…
