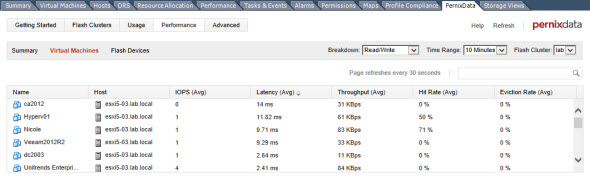

New features have been announced by PernixData during the SFD5 event. If you don't know PernixData and their FVP technology, you should check this post: PernixData FVP in my lab. In short, PernixData FVP uses a kernel module; it's not a VM acceleration appliance. You just install local SSDs or PCIe flash card(s) in the servers participating in your vSphere cluster and watch the magic happen.

PernixData announced some exciting new features for their FVP software. Until now, FVP could accelerate workloads only by using server-side flash (SSD), and this acceleration was possible “only” on block-based storage. This is about to change.

The newly announced (not yet available) features in the new release of PernixData FVP are:

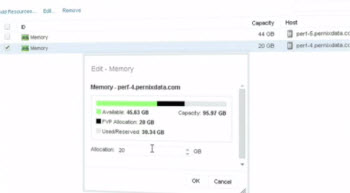

- FVP Clustering Using RAM – by using RAM as an acceleration medium, it will be possible to accelerate workloads where currently only locally installed SSDs are allowed. RAM, as the fastest medium, allows the fastest acceleration. Memory can be increased or decreased on the fly.

Quote from the PernixData blog:

FVP integrates directly into the kernel, aggregating memory into a pool of acceleration resources that provides dynamic re-sizing without reboots or impact on VM operations.

- NFS Storage Support – in version 1.0 only block storage was supported. Adding NFS support makes sense, since it allows workloads running on NFS datastores to be accelerated as well.

Another interesting quote:

With full VMkernel integrated functionality, you can attach an NFS datastore without modifying or rebooting virtual machines, changing your hosts, or reconfiguring your storage medium (e.g. IP addresses, VLANs, mount points, etc).

- Network Compression – compressing network traffic before it goes on the wire. Who would have thought it was possible? By intelligently monitoring the workload of the VM in real time, FVP can compress network traffic on demand. With compression activated, there is less data to replicate to the other side.

- Topology Aware FVP via replica groups – the possibility to store replica data at a preferred location, which allows planning for DR scenarios or maximizing performance. It will be possible to assign hosts to a replica group. FVP then automatically chooses another host in the group as the replication target, and that host receives the write data. If there is a network failure or host failure, FVP automatically assigns another host from the same replica group, and this host becomes the new replica host.
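To make the replica group idea a bit more concrete, here is a minimal Python sketch of the selection and failover logic as described above. It is only an illustration and not PernixData code or an FVP API: the `ReplicaGroup` class, its methods, and the host names are all hypothetical, and the "first healthy peer" selection rule is an assumption made for simplicity.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class ReplicaGroup:
    """Hypothetical model of a replica group: a named set of hosts."""
    name: str
    hosts: List[str]
    healthy: Set[str] = field(init=False)

    def __post_init__(self) -> None:
        # Assumption: every host in the group starts out reachable.
        self.healthy = set(self.hosts)

    def mark_failed(self, host: str) -> None:
        """Record a host or network failure for the given host."""
        self.healthy.discard(host)

    def pick_replica(self, source: str) -> Optional[str]:
        """Return the first healthy host in the group other than the source."""
        for host in self.hosts:
            if host != source and host in self.healthy:
                return host
        return None  # no healthy peer left in this replica group


group = ReplicaGroup("site-a", ["esx01", "esx02", "esx03"])
replica = group.pick_replica("esx01")       # initial replica host
group.mark_failed(replica)                  # simulate a host failure
new_replica = group.pick_replica("esx01")   # replacement from the same group
```

The point of the sketch is that the replacement replica host always comes from the same group, which is what lets you control where replica data lives.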

It's quite magical, isn't it? You should definitely check out the demo videos where Satyam Vaghani shows the technology in action.

- NFS Support

- Network Compression

- Distributed Fault Tolerant Memory with possibility to increase or decrease

- Topology Awareness

Enjoy….

There are other videos from the day on the PernixData website here.

Update: I took a photo with Satyam Vaghani, one of the founders of PernixData, during VMworld Barcelona 2014. PernixData is now GA. You can check the post here.

You should also check the vmpete.com blog, where Pete Koehler wrote the post Testing InfiniBand in the home lab with PernixData FVP. Cool stuff… He was using an InfiniBand network in his lab!

I'll certainly write more about this technology when I get my hands on it… :-)

Source: PernixData Blog

Thank you Vladan for your kind reference to my blog. Much appreciated. Keep up the great work.

– Pete