VMware vSAN, as a hyperconverged infrastructure (HCI) solution, has a few basic building blocks. One of them is the vSAN disk group, which we detailed in one of our previous posts. As the technology is still fairly young and many folks are looking for information, I thought it would be a good idea to explain it further. So today we'll build on the disk group topic and answer the question: What is VMware vSAN Caching Tier?

Each host that is part of a vSAN cluster contributes local storage (or direct-attached storage (DAS), if you like), and this storage is pooled together to form a single datastore visible to all the hosts within the cluster. This datastore is called the vSAN datastore.

A vSAN cluster is limited to 64 hosts, which is also the limit of a VMware cluster in general. Each node (each host) is connected to the vSAN datastore, which spans the whole cluster. vSAN provides storage to running VMs, but it can also be configured to provide iSCSI to the outside world, that is, to other servers that are not part of the cluster.

vSAN caching is one of the key elements of the infrastructure, and high-endurance SSD devices listed on the vSAN HCL are recommended for it. These devices absorb the reads and writes from the workloads running within the infrastructure and deliver excellent performance, which scales linearly as new nodes are added to the cluster.

What is VMware vSAN Caching Tier?

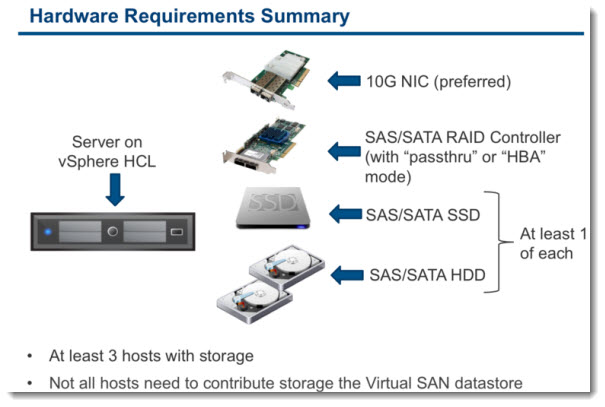

Each disk group on a host participating in a vSAN cluster has one device used for the caching tier and one or more devices (up to 7 disks/SSDs) used for the capacity tier. The caching device can be a SATA/SAS SSD or a PCIe NVMe device, but other devices, such as Memory Channel Storage from Diablo Technologies, can be used as well.

The vSAN caching tier is not a place where you can permanently save anything. It only holds “hot” data. When the data becomes “cold”, it is de-staged and saved to the capacity tier.

And vice versa: when there is a cache miss, the data is pulled back into the cache tier and becomes hot again. The size of this hot set varies and depends on the workloads that are running.

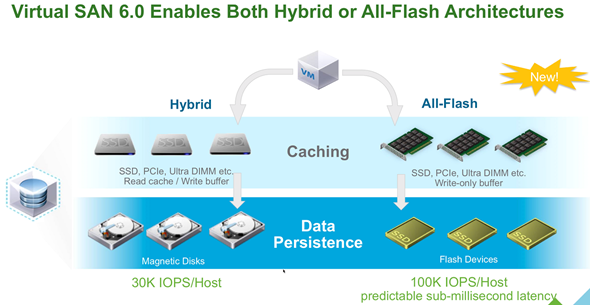

Read Cache – used only in hybrid vSAN. It keeps a collection of recently read disk blocks. If there is a cache hit, the latency is minimal, as the block can be fetched from SSD instead of from disk.
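To make the hit/miss behaviour concrete, here is a minimal toy sketch of a read cache sitting in front of slower capacity disks. It is only an illustration: the class name `ToyReadCache`, the least-recently-used eviction, and the dictionary standing in for the capacity tier are all assumptions made for the example, not vSAN's actual algorithm.

```python
from collections import OrderedDict


class ToyReadCache:
    """Toy read cache (hypothetical, not vSAN code) in front of a slow backing store."""

    def __init__(self, capacity_blocks, backing_store):
        self.capacity_blocks = capacity_blocks
        self.backing_store = backing_store  # dict: block id -> data ("capacity tier")
        self.blocks = OrderedDict()         # cached blocks, oldest first
        self.hits = 0
        self.misses = 0

    def read(self, block_id):
        if block_id in self.blocks:
            # Cache hit: served from the fast device, block stays "hot".
            self.hits += 1
            self.blocks.move_to_end(block_id)
            return self.blocks[block_id]
        # Cache miss: fetch from the capacity tier and keep a copy in cache.
        self.misses += 1
        data = self.backing_store[block_id]
        self.blocks[block_id] = data
        if len(self.blocks) > self.capacity_blocks:
            # Assumed policy: drop the least recently read block.
            self.blocks.popitem(last=False)
        return data


capacity_tier = {n: f"block-{n}" for n in range(10)}
cache = ToyReadCache(capacity_blocks=2, backing_store=capacity_tier)
for block in (1, 2, 1, 3, 2):
    cache.read(block)
print(cache.hits, cache.misses)
```

With a two-block cache and the read pattern 1, 2, 1, 3, 2, only the second read of block 1 is a hit; every other read has to go to the capacity tier.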

Write Cache – used in hybrid and also in All-Flash configurations. It acts as a write buffer and improves write performance. The cache contents are also copied elsewhere within the cluster, so there is at least one additional copy of the VM's data.

Interesting fact:

Once a write is initiated by the application running inside the guest OS, the write is duplicated to the write cache on the hosts that contain replica copies of the storage objects. This means that in the event of a host failure, we also have a copy of the in-cache data and no data is lost; the virtual machine simply uses the replicated copy of the cache as well as the replicated capacity data.
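The idea can be sketched in a few lines. This is a simplified model under assumptions: the `Host` class, the function names and the host names are hypothetical, and real vSAN handles acknowledgement, ordering and de-staging in ways this sketch does not attempt to reproduce.

```python
class Host:
    """Hypothetical stand-in for a host holding a replica of a storage object."""

    def __init__(self, name):
        self.name = name
        self.write_buffer = {}  # block id -> data sitting in this host's cache tier
        self.alive = True


def replicated_write(replica_hosts, block_id, data):
    """Place the write in the write buffer of every host that holds a replica."""
    for host in replica_hosts:
        host.write_buffer[block_id] = data
    return len(replica_hosts)  # number of in-cache copies


def read_buffered(replica_hosts, block_id):
    """Return the buffered data from the first surviving replica host."""
    for host in replica_hosts:
        if host.alive and block_id in host.write_buffer:
            return host.write_buffer[block_id]
    raise KeyError(block_id)


host_a, host_b = Host("esxi-01"), Host("esxi-02")
copies = replicated_write([host_a, host_b], block_id=7, data=b"payload")
host_a.alive = False  # simulate a host failure before de-staging
recovered = read_buffered([host_a, host_b], block_id=7)
print(copies, recovered)
```

Even though the first host fails while the data is still only in cache, the second host's write buffer still holds the block.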

Client Cache – (available since vSAN 6.2) – uses DRAM on the host where the VM is running to accelerate read performance. It takes about 0.4% of host memory, up to 1GB per host. The RAM is closer to the VM, so reads are faster because the data does not have to be fetched over the network from a caching device on another host.

Client cache complements CBRC (Content-Based Read Cache, known from VDI) and caches not only the read-only replica but other VMDKs too.

VMware recently updated the caching guidelines. While the 1:10 cache-to-capacity ratio is still recommended for hybrid vSAN, for All-Flash vSAN they now recommend sizing the cache tier with larger devices.

Since vSAN 6.0, if the flash device used for the caching layer in an all-flash configuration is smaller than 600GB, then 100% of the device is used for cache. However, if the flash cache device is larger than 600GB, then only 600GB is used for caching. This limit applies per disk group.

How is the excess capacity used?

vSAN cycles through all cells in a cache SSD, so any excess capacity (beyond the recommended size) is used for endurance and helps extend the life of the drive.
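As a quick illustration of the 600GB rule, the small sketch below splits an all-flash cache device into the part used for caching and the part that only contributes to endurance. The function and constant names are made up for this example.

```python
CACHE_CAP_GB = 600  # per disk group, all-flash, as described above


def usable_cache_gb(cache_device_gb):
    """Capacity of the cache device that is actually used for caching."""
    return min(cache_device_gb, CACHE_CAP_GB)


def endurance_only_gb(cache_device_gb):
    """Excess capacity that is not used for caching but helps drive endurance."""
    return max(cache_device_gb - CACHE_CAP_GB, 0)


for size_gb in (400, 600, 800, 1600):
    print(size_gb, usable_cache_gb(size_gb), endurance_only_gb(size_gb))
```

For an 800GB device this gives 600GB of cache and 200GB of excess capacity; a 400GB device is used entirely for cache.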

An example of sizing:

Note that the detailed and complete example can be found at storagehub.vmware.com. Below, it's just an overview.

400 VMs, each with a 100GB disk and, as the storage calculation below shows, a second 200GB disk. Hybrid vSAN environment. The customer requires 100 cores overall. The customer has sourced servers that contain 12 cores per socket, so a dual-socket system provides 24 cores. That gives a total of 120 cores across the 5-node cluster, which is more than enough for our 110 core requirement.

- Total Storage Requirements (without FTT):
    - (400 x 100GB) + (400 x 200GB)
    - 40TB + 80TB
    - = 120TB
- Raw Storage Requirements (with FTT):
    - = 120TB x 2
    - = 240TB
- Raw Storage Requirements (with FTT) + VM Swap (with FTT):
    - = (120TB + 4.8TB) x 2
    - = 240TB + 9.6TB
    - = 249.6TB
- Raw Storage Consumption (without FTT) for cache sizing:
    - (75% of total storage without FTT)
    - = 75% of 120TB
    - = 90TB
- Cache Required (10% of Estimated Storage Consumption): 9TB
- Estimated Snapshot Storage Consumption: 2 snapshots per VM
    - It is estimated that the two snapshot images together will never grow larger than 5% of the base VMDK
    - Storage Requirements (with FTT) = 240TB
    - There is no requirement to capture virtual machine memory when a snapshot is taken
    - Estimated Snapshot Requirements (with FTT) = 5% of 240TB = 12TB
- Raw Storage Requirements (VMs + Snapshots):
    - = 249.6TB + 12TB
    - = 261.6TB
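The arithmetic above can be reproduced with a short script, which is handy if you want to plug in your own numbers. This is only a sketch: the function and parameter names are invented for the example, the FTT multiplier of 2 assumes mirroring as in the calculation above, and the 4.8TB of VM swap is taken from the example as-is.

```python
def size_hybrid_vsan(vm_count, disks_gb, swap_tb, ftt_multiplier=2,
                     consumption_ratio=0.75, cache_ratio=0.10,
                     snapshot_ratio=0.05):
    """Rough hybrid vSAN sizing following the steps of the example (all values in TB)."""
    base_tb = vm_count * sum(disks_gb) / 1000           # without FTT
    raw_tb = base_tb * ftt_multiplier                   # with FTT
    raw_with_swap_tb = (base_tb + swap_tb) * ftt_multiplier
    consumption_tb = base_tb * consumption_ratio        # estimated consumption, no FTT
    cache_tb = consumption_tb * cache_ratio             # 10% rule for hybrid
    snapshots_tb = raw_tb * snapshot_ratio              # snapshots, with FTT
    return {
        "base_tb": base_tb,
        "raw_tb": raw_tb,
        "raw_with_swap_tb": raw_with_swap_tb,
        "consumption_tb": consumption_tb,
        "cache_tb": cache_tb,
        "snapshots_tb": snapshots_tb,
        "total_raw_tb": raw_with_swap_tb + snapshots_tb,
    }


result = size_hybrid_vsan(vm_count=400, disks_gb=(100, 200), swap_tb=4.8)
for name, value in result.items():
    print(f"{name}: {value:.1f}")
```

Run with the values from the example, it reports 9TB of cache and 261.6TB of raw capacity, matching the figures in the list.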

Wrap Up:

The vSAN caching tier should be sized at about 10% of the storage capacity consumed before the NumberOfFailuresToTolerate (FTT) VM storage policy is applied. Start from the VMs' sizes, then consider the FTT method used (RAID 1 or RAID 5/6) to properly pick the approximate 10% for the caching tier.

Nice compiled post, now concepts are much cleared about vSAN cache…

Thank you for this and the Disk group write up. Very easy to consume!

So how does it handle read cache in the cache tier? In the write tier it has a mirror for FTT=1, but does the read space double too, or is it just the most recent local reads from that individual host?