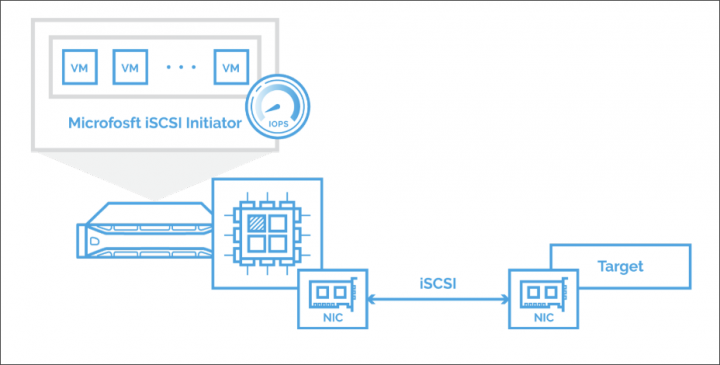

iSCSI technology is over 20 years old. It was designed for systems with one or two CPU cores and has not evolved much since, so it does not make efficient use of modern multi-core CPUs. As a result, the Microsoft iSCSI initiator in its default configuration isn't very efficient, because it does not use all CPU cores to process the load. StarWind offers a free solution called StarWind iSCSI Accelerator that lets you quickly get maximum IOPS for your applications by tuning the Microsoft iSCSI initiator to distribute the load across all CPU cores.

It is not a full-blown product but rather a load balancer, made up of a driver and scripts, that needs to be installed on the “client” system where the iSCSI initiator runs. Installation and start/stop actions are done manually via batch scripts provided by StarWind.

The load balancer spreads the iSCSI workload evenly across all CPU cores.
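To picture what spreading the load across cores means, here is a toy model in Python. It is not StarWind's driver logic, which this post does not describe. It simply assumes round-robin placement of sessions onto cores, and the session names and core counts are made up for illustration.

```python
from collections import Counter
from itertools import cycle


def assign_round_robin(session_ids, core_count):
    """Assign each session to a core in turn and return {session_id: core}."""
    if core_count < 1:
        raise ValueError("core_count must be at least 1")
    cores = cycle(range(core_count))
    return {session: next(cores) for session in session_ids}


def sessions_per_core(assignment, total_cores):
    """Count how many sessions landed on each core (idle cores included)."""
    counts = Counter(assignment.values())
    return [counts.get(core, 0) for core in range(total_cores)]


if __name__ == "__main__":
    sessions = [f"session-{n}" for n in range(16)]  # hypothetical session names
    # All sessions handled by two cores of an 8-core machine,
    # versus the same sessions spread over all eight cores.
    two_cores = assign_round_robin(sessions, 2)
    all_cores = assign_round_robin(sessions, 8)
    print("2 cores used:", sessions_per_core(two_cores, 8))
    print("8 cores used:", sessions_per_core(all_cores, 8))
```

In the first case two cores carry everything while six sit idle. In the second, every core gets an equal share, which is the effect the accelerator is aiming for.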

Please note that this is a Technical Preview version, so you might want to test it before running production workloads.

Quote from StarWind:

StarWind iSCSI Accelerator fixes the MS iSCSI Initiator performance bottleneck by automatically distributing the iSCSI workload among all available CPU cores. As a result, users can easily squeeze maximum IOPS for their applications without wasting time on Microsoft iSCSI Initiator tuning.

StarWind iSCSI Accelerator – The steps to deploy and run

1. Execute the script prepare_test_machine.cmd

It installs a test certificate, switches the machine to test mode, and disables integrity checks so that drivers signed with self-signed certificates can be loaded. This requires a reboot, so the script initiates one.

NOTE: If this step fails, make sure Secure Boot is disabled on the server.

2. Execute the script install_lb.cmd

It will install the iSCSI Accelerator driver.

3. Use the start_lb.cmd and stop_lb.cmd scripts to start and stop the iSCSI Accelerator driver.

When the driver is started, it automatically processes all new iSCSI sessions to distribute CPU load evenly.
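If you deploy on more than one machine, the steps above can be wrapped in a small helper. The sketch below is only one way to do it, in Python. The script names are the ones listed above, but the split into phases, the folder path and the function names are my own assumptions. Because the prepare script reboots the machine, the install phase has to be run separately after the reboot. It defaults to a dry run that only prints what it would execute.

```python
import subprocess
from pathlib import PureWindowsPath

# Script names come from the steps above; the phase grouping is an assumption.
PHASES = {
    "prepare": ["prepare_test_machine.cmd"],        # ends with a reboot
    "install": ["install_lb.cmd", "start_lb.cmd"],  # run after the reboot
    "stop": ["stop_lb.cmd"],
}


def build_commands(phase, script_dir):
    """Return the cmd.exe invocations for one phase, in order."""
    if phase not in PHASES:
        raise ValueError(f"unknown phase: {phase!r}")
    folder = PureWindowsPath(script_dir)
    return [["cmd.exe", "/c", str(folder / name)] for name in PHASES[phase]]


def run_phase(phase, script_dir, dry_run=True):
    """Print the commands for a phase; execute them only if dry_run is False."""
    for command in build_commands(phase, script_dir):
        print("would run:" if dry_run else "running:", " ".join(command))
        if not dry_run:
            # check=True stops at the first script that returns an error code.
            subprocess.run(command, check=True)


if __name__ == "__main__":
    # Hypothetical folder where the StarWind scripts were unpacked.
    run_phase("prepare", r"C:\StarWind\iSCSIAccelerator")
```

Call `run_phase("install", ..., dry_run=False)` once the machine is back up, and `run_phase("stop", ..., dry_run=False)` when you want to stop the driver.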

Advantages

- Fast Deployment – You save the time you would otherwise spend fine-tuning the Microsoft iSCSI initiator for better performance. You'll be able to deploy the accelerator in less than a couple of minutes.

- Free of charge – Yes, it's a free tool, and you don't need any additional hardware for that.

- Speed – You'll be able to accelerate Hyper-V VMs and Windows Server applications by distributing iSCSI workloads across all available CPU cores instead of just one or two.

Links and Downloads:

- StarWind iSCSI Accelerator (Download and PDF)

More about StarWind and storage

StarWind continues to innovate. The company has been around for many years, and iSCSI and storage are its specialty. Recently, however, it has been moving forward with another interesting technology called NVMe over Fabrics (NVMe-oF).

NVMe over Fabrics is a newer standard that maps NVMe to RDMA in order to allow remote access to storage devices over an RDMA fabric using the same NVMe language. NVMe has a much larger queue depth than SAS or SATA (64,000 versus 254 for SAS and 31 for SATA).
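To put those queue depth numbers in perspective, here is a quick back-of-the-envelope calculation in Python. The depths are the figures quoted above. The second function applies Little's law (throughput is at most outstanding requests divided by latency), and the 100 microsecond latency is an assumed value picked only for illustration. Real devices hit other limits long before the NVMe ceiling.

```python
# Queue depth figures as quoted in the paragraph above.
QUEUE_DEPTH = {"NVMe": 64000, "SAS": 254, "SATA": 31}


def depth_ratio(a, b):
    """How many times deeper interface a's queue is than interface b's."""
    return QUEUE_DEPTH[a] / QUEUE_DEPTH[b]


def iops_ceiling(queue_depth, latency_seconds):
    """Little's law: throughput <= outstanding requests / latency per request."""
    return queue_depth / latency_seconds


if __name__ == "__main__":
    print(f"NVMe vs SAS:  {depth_ratio('NVMe', 'SAS'):.0f}x deeper")
    print(f"NVMe vs SATA: {depth_ratio('NVMe', 'SATA'):.0f}x deeper")
    # Assumed latency of 100 microseconds, for illustration only.
    for name, depth in QUEUE_DEPTH.items():
        print(f"{name}: at most {iops_ceiling(depth, 100e-6):,.0f} IOPS per queue")
```

The point is simply that a shallow queue caps how many requests can be in flight, so it caps throughput no matter how fast the flash behind it is.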

StarWind supports NVMe over Fabrics (NVMe-oF), the protocol tailored to squeeze maximum NVMe performance at minimum latency and CPU utilization. This protocol makes it possible to build NVMe storage that is almost 8 times more efficient than with any SCSI-derived protocol.

You might want to read more about it here:

How To Create NVMe-Of Target With StarWind VSAN

That's it for today. I hope you had a good read.

More posts about StarWind on ESX Virtualization:

- StarWind Storage Gateway for Wasabi Released

- How To Create NVMe-Of Target With StarWind VSAN

- Veeam 3-2-1 Backup Rule Now With Starwind VTL

- StarWind and Highly Available NFS

- StarWind VVOLS Support and details of integration with VMware vSphere

- StarWind VSAN on 3 ESXi Nodes detailed setup

- VMware VSAN Ready Nodes in StarWind HyperConverged Appliance
