To be honest, this did not occur to me until I noticed how the bottlenecks in storage have shifted. Back in the day, when we were using spinning media, the only way we could improve IOPS was to change the RAID type, for example from RAID 5 to RAID 10. This obviously sacrificed much more disk space, but the performance was better. Then came the SSD revolution with SATA/SAS devices, which still used the same RAID cards, and performance increased tremendously. With NVMe, however, we have a slight problem.
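To put rough numbers on that RAID 5 versus RAID 10 trade-off, here is a minimal sketch using the textbook rules of thumb: RAID 5 gives up one drive's worth of capacity and costs about four back-end I/Os per random write, while RAID 10 gives up half the capacity and costs two. The function name and the drive figures (8 drives, 4 TB, 200 IOPS each) are made up for illustration and do not come from any benchmark.

```python
def raid_profile(level, drives, drive_tb, drive_iops):
    """Return (usable_tb, random_write_iops) for a RAID 5 or RAID 10 array.

    Uses the classic rule-of-thumb write penalties; real controllers,
    caches and workloads will behave differently.
    """
    if level == "raid5":
        usable_tb = (drives - 1) * drive_tb   # one drive's worth of parity
        write_penalty = 4                     # read data, read parity, write data, write parity
    elif level == "raid10":
        usable_tb = drives / 2 * drive_tb     # everything is mirrored
        write_penalty = 2                     # each write lands on both mirror members
    else:
        raise ValueError(f"unsupported RAID level: {level}")
    return usable_tb, drives * drive_iops / write_penalty


# Hypothetical array: 8 spinning disks, 4 TB and 200 IOPS each.
for level in ("raid5", "raid10"):
    usable, write_iops = raid_profile(level, drives=8, drive_tb=4, drive_iops=200)
    print(f"{level}: {usable} TB usable, ~{write_iops:.0f} random write IOPS")
```

With these assumed numbers, RAID 10 roughly doubles the random write IOPS while dropping usable capacity from 28 TB to 16 TB, which is the trade-off described above.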

Today, a single NVMe SSD can deliver around one million IOPS. If you try to handle this with a traditional RAID configuration, you create a huge bottleneck. A single NVMe SSD offers excellent performance, but you can't really combine several of them behind a traditional RAID system, which is limited to a few disks at most. If you use software RAID instead, you lose performance too.
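A quick back-of-the-envelope sketch shows why this becomes a bottleneck. The one million IOPS per drive comes from the paragraph above; the controller ceiling of 1.5 million IOPS is purely a hypothetical placeholder, not a measured figure for any real RAID card.

```python
def delivered_iops(drives, per_drive_iops, controller_ceiling_iops):
    """Return (raw_iops, delivered_iops, fraction_used).

    Models the RAID layer as a simple ceiling on total IOPS, which is a
    deliberate oversimplification for illustration only.
    """
    raw = drives * per_drive_iops
    delivered = min(raw, controller_ceiling_iops)
    return raw, delivered, delivered / raw


# 4 NVMe drives at ~1M IOPS each behind a hypothetical 1.5M IOPS controller.
raw, delivered, fraction = delivered_iops(4, 1_000_000, 1_500_000)
print(f"raw: {raw:,} IOPS, delivered: {delivered:,} IOPS ({fraction:.0%} of the drives' potential)")
```

Under these assumptions, every drive added beyond the second delivers no extra performance, because the RAID layer rather than the media sets the limit.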

There is a solution from GRAID: their GRAID Technology SupremeRAID™ SR-1000 card, which uses a GPU on the card itself! They received an Innovation Award at CES 2022. The card connects via an x16 PCIe Gen 3.0 interface and supports RAID 0, 1, 5, 6, or 10. It can handle up to 32 physical drives. It's exactly this device that is used in StarWind's Backup Appliance (check out the updated technical spec PDF here) for the fastest NVMe RAID data protection.

What's the actual performance then?

StarWind has also tested the performance of such a system, using their latest StarWind Backup Appliance in conjunction with Veeam Backup & Replication. There is a full blog post with the setup, benchmarking methodology, and details, but I'd like to highlight a few things here.

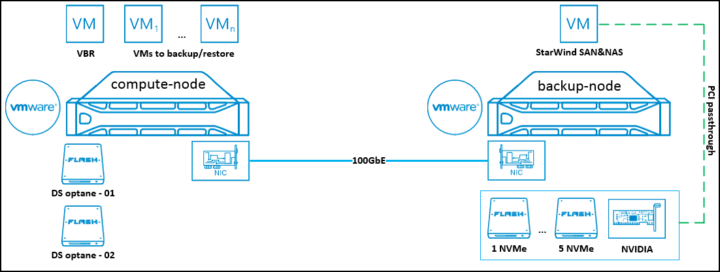

They're using PCI passthrough for the disk attachment to the StarWind VM.

Quote:

The VM with StarWind SAN&NAS, containing 5 NVMe (Intel Optane SSD DC P5800X) and GPU NVIDIA Quadro T1000, has been transferred to the backup-node. Thanks to GRAID NVMe, these were arranged into the RAID5 array, which will serve as the backup repository. Furthermore, 2 VMFS datastores were built over 2 NVMe (Intel Optane SSD DC P5800X) on the compute-node to contain VMs for backup/restore operations.
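For readers who want a reminder of what RAID 5 does with those five drives, here is a minimal sketch of XOR parity: four data blocks plus one parity block per stripe, with any single lost block rebuilt from the survivors. This is the generic RAID 5 idea only, not GRAID's GPU implementation (which isn't public), and the block contents are made up. Real arrays also rotate the parity block across the drives from stripe to stripe.

```python
from functools import reduce


def xor_blocks(blocks):
    """XOR a list of equal-length byte blocks together, byte by byte."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


# One stripe across 5 drives: 4 data blocks + 1 parity block (illustrative data).
data = [b"AAAA", b"BBBB", b"CCCC", b"DDDD"]
parity = xor_blocks(data)

# Simulate losing the third drive and rebuilding its block from the rest.
survivors = data[:2] + data[3:] + [parity]
rebuilt = xor_blocks(survivors)

print(rebuilt == data[2])
```

The same property explains the capacity cost: with five drives in RAID 5, one drive's worth of space goes to parity and four remain usable.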

There are three different test scenarios, so presenting only one of them here would not do them justice. It's best to head over and read the detailed post on the StarWind blog here.

The SupremeRAID card processes the I/O directly, so the server's CPU isn't used for that. The card is GPU-based (NVIDIA), so the solution is very powerful compared to traditional RAID cards. The GRAID cards apparently use an AI engine, but we don't have much more information, as it's probably kept secret.

Quote from Graidtech:

Our disruptive software plus hardware solution unlocks the traditional performance bottleneck of RAID protection for SSDs. It's also the world’s first NVMeoF RAID solution that not only protects direct-attached SSDs but also those connected via NVMe over Fabrics (NVMeoF).


