Being part of the vExpert program has been an awesome journey for me from the beginning. Ever since I started blogging, the VMware vExpert award has been something that makes me proud, pushes me forward in my career and also brings some goodies. As a vExpert, I'd like to thank @Intel and the @vExpert program for the opportunity to test some unique technology with Intel Optane drives.

As you might know, Intel Optane technology is built on a 3D XPoint architecture, which allows for faster data access and transfer speeds, higher endurance, and lower power consumption compared to traditional NAND flash memory.

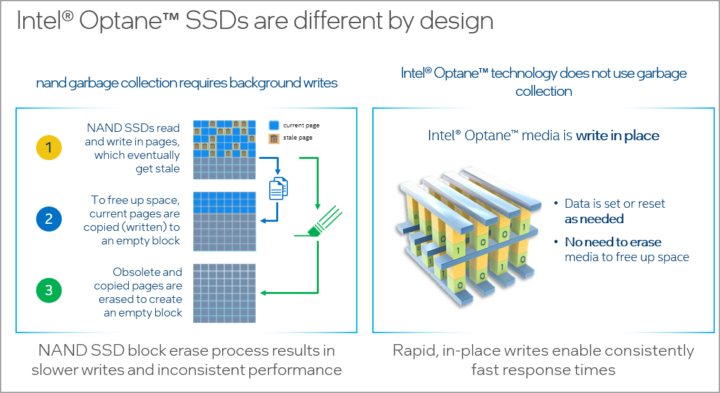

Optane SSDs are high-performance solid-state drives that use Optane technology to deliver faster data access and transfer speeds than traditional NAND-based SSDs. The technology differs from the traditional NAND-based design, which needs things like garbage collection: to free up space, the pages still in use have to be copied to an empty block first.

Optane technology writes in place: data can be overwritten directly, with no need to erase anything first to free up space. That means faster writes, faster response times, better efficiency and higher durability.
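
To make the difference more concrete, here is a minimal sketch in Python. It is a toy model only, not how real SSD controllers work: the function names and the 63-page figure are made up for illustration. It simply counts how many page writes hit the media when a single page is updated, once for a NAND-style drive that must first relocate the pages still in use, and once for write-in-place media.

```python
# Toy model only: real SSD controllers are far more sophisticated.


def nand_page_writes_for_update(valid_pages: int) -> int:
    """Page writes needed to update one page when a block must be reclaimed first.

    The still-valid pages are copied to an empty block, then the new page is written.
    """
    if valid_pages < 0:
        raise ValueError("valid_pages must be non-negative")
    return valid_pages + 1


def in_place_writes_for_update() -> int:
    """Write-in-place media overwrites the data directly: one write, no erase."""
    return 1


def write_amplification(physical_writes: int, logical_writes: int = 1) -> float:
    """Ratio of writes hitting the media to writes requested by the host."""
    return physical_writes / logical_writes


if __name__ == "__main__":
    # Hypothetical block that still holds 63 valid pages.
    nand = nand_page_writes_for_update(63)
    print("NAND-style:", write_amplification(nand))
    print("Write in place:", write_amplification(in_place_writes_for_update()))
```

The higher the ratio, the more extra work (and wear) the drive takes on for the same amount of data written by the host.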

Two main formats

Optane drives come either as PCIe add-in cards or as U.2 disks. A PCIe card takes one slot per drive, while several U.2 disks can share a single slot when hooked up to an adapter card.

Nested Lab setup

At the time of writing, I'm using these drives in a commercial workstation. My motherboard can only offer a single PCIe slot, so for now I'm using the U.2 version, which lets me fit the maximum number of drives into my case and make the most of the PCIe slots.

Imagine you have a motherboard with 5 PCIe slots: with this solution, you could hook up 20 Optane drives…
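
The arithmetic behind that number can be sketched in a few lines of Python. This is a back-of-the-envelope calculation, assuming every slot is a full x16 slot that can be split into x4 links and that each U.2 NVMe drive uses a x4 link; the function name and defaults are mine, for illustration only.

```python
LANES_PER_U2_DRIVE = 4  # assumption: each U.2 NVMe drive uses a PCIe x4 link


def max_u2_drives(slots: int, lanes_per_slot: int = 16) -> int:
    """Upper bound on U.2 drives when every slot carries one multi-port adapter."""
    if slots < 0 or lanes_per_slot < 0:
        raise ValueError("slots and lanes_per_slot must be non-negative")
    drives_per_slot = lanes_per_slot // LANES_PER_U2_DRIVE
    return slots * drives_per_slot


if __name__ == "__main__":
    print(max_u2_drives(5))  # five x16 slots, four drives per adapter
    print(max_u2_drives(1))  # a single x16 slot, as in my workstation
```

In practice many boards wire some physical x16 slots with fewer lanes, so the real number depends on the motherboard and CPU.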

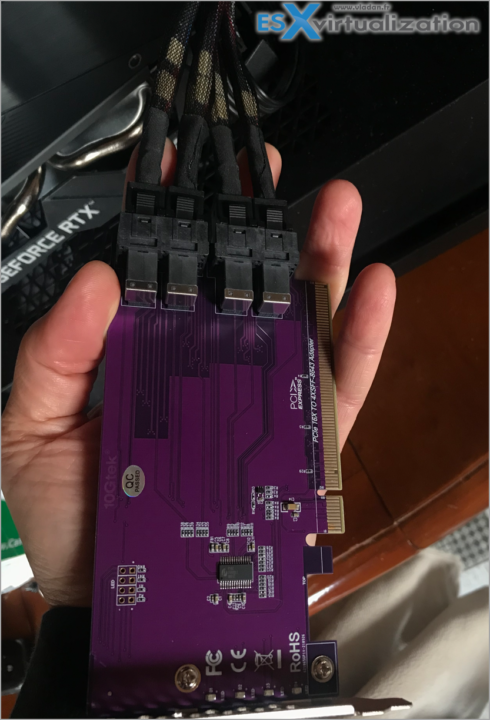

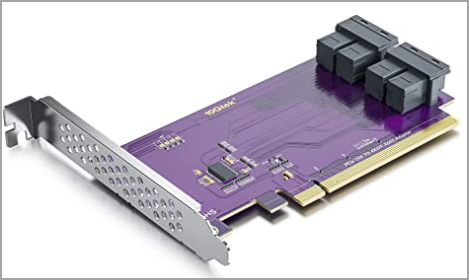

I have a PCIe adapter card with four ports. (It's a PCIe to SFF-8643 adapter for U.2 SSD, X16, (4) SFF-8643. It supports Windows 10/2016/2019, RHEL/CentOS 7/8, VMware ESXi 6/7, Ubuntu Linux 18/20/21, etc.)

It looks like this…

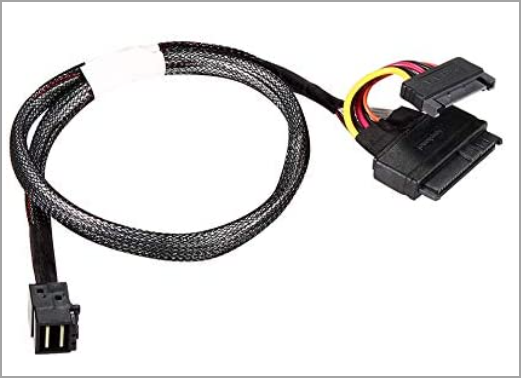

And the cables… (each is a 50 cm HD Mini-SAS (SFF-8643) to U.2 (SFF-8639) NVMe SSD cable)

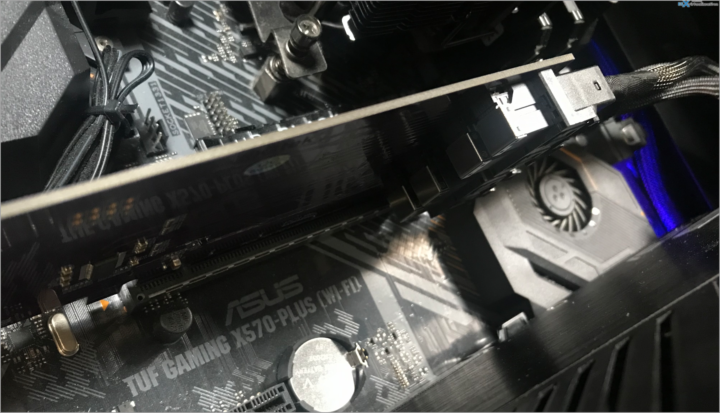

So I can attach 4 Optane U.2 format disks. Below is the view of the motherboard with the extension card plugged in.

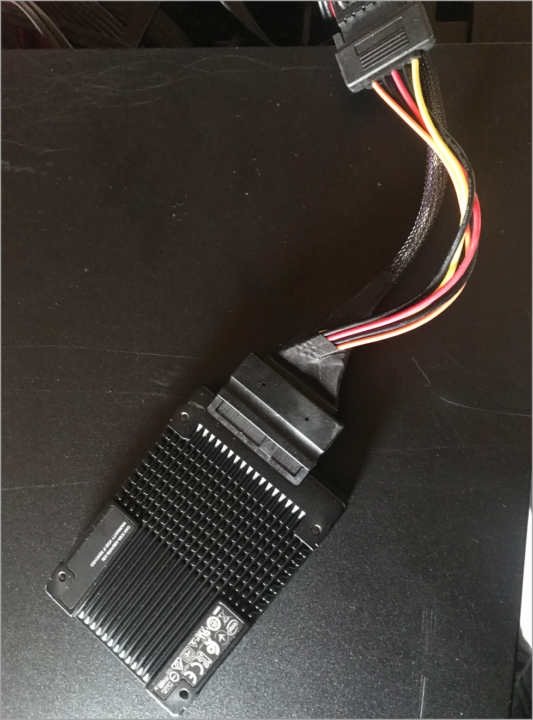

Here you can see the U.2 drive in detail. Note that you'll not only need to purchase a cable, but also make sure that your power supply has a connector available for each drive…

Here is the cable, with the power cable…

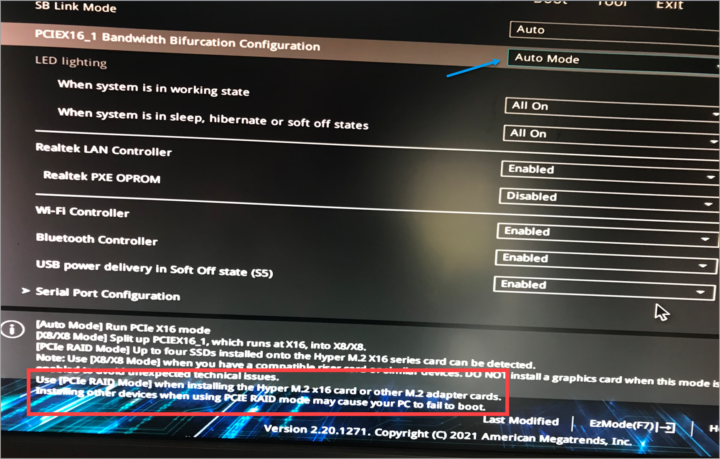

BIOS configuration

Yes, the PCIe slot has to be configured in the BIOS, so make sure that your motherboard allows that. There is a drop-down menu where you can choose between different options. I have an ASUS motherboard with an AMD Ryzen CPU.

- ASUS motherboard X570 TUF Gaming X570-Plus (WI-FI) – AM4 – https://amzn.to/3hMckBJ

- AMD Ryzen 9 5950X 16-core, 32-Thread Unlocked Desktop Processor- https://amzn.to/3qTsJbP

There are 3 different modes:

- X16 – standard max speed

- X8/X8 – split mode into X8/X8

- RAID mode – for use when using RAID and M.2 adapter cards.
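
As a rough illustration of why the mode matters, here is a small Python sketch. It assumes a passive adapter card (no PCIe switch chip on board), where each lane group created by the BIOS can host one drive. The lane split I list for "RAID mode" (four x4 links) is my assumption about how such a mode typically works with multi-drive adapter cards, so check your motherboard manual.

```python
# Lane groups per BIOS mode for a single x16 slot.
MODES = {
    "X16": [16],
    "X8/X8": [8, 8],
    "RAID mode": [4, 4, 4, 4],  # assumption: x4/x4/x4/x4 split
}


def drives_visible(mode: str, lanes_per_drive: int = 4) -> int:
    """Number of x4 drives a passive adapter can expose in the given mode.

    Each lane group links to at most one device, however wide the group is.
    """
    return sum(1 for width in MODES[mode] if width >= lanes_per_drive)


if __name__ == "__main__":
    for mode in MODES:
        print(mode, "->", drives_visible(mode), "drive(s)")
```

With this model, only the four-way split lets all four U.2 disks on the adapter show up as separate devices.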

The config might change over time, so don't look at this as an ultimate lab, but rather as a lab that has evolved into its current shape. When I choose a new motherboard, I'll make sure to pick one with as many PCIe slots as possible!

Nested Workloads only

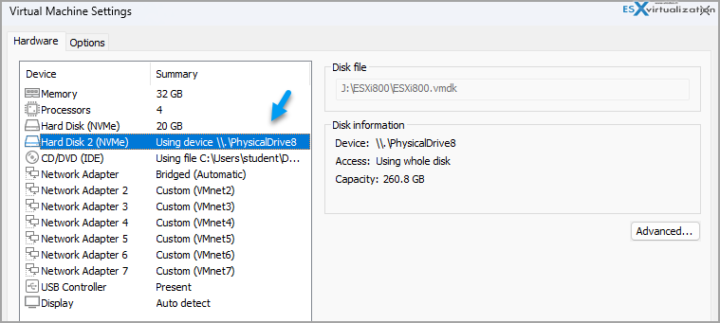

So basically I'm using the lab to spin up VMs in VMware Workstation, where I installed the latest VMware vSAN 8.0 U1.

I'm passing through one disk to each ESXi host within the vSAN cluster.

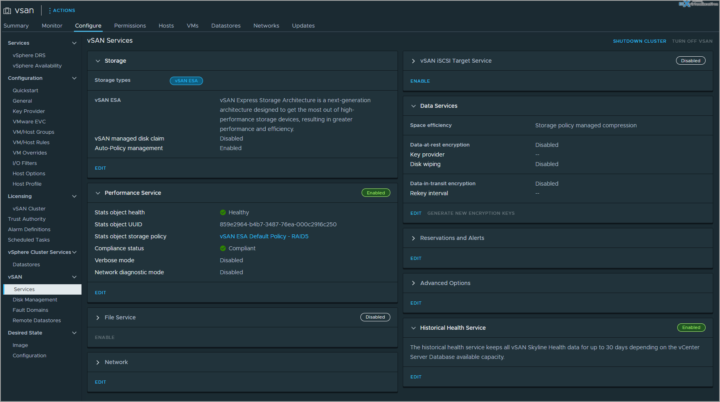

vSAN ESA (Express Storage Architecture) is the new architecture introduced in vSAN 8.0. This is the UI… The nested lab lets me test different configurations while keeping the main workstation as a working platform. In my case, it's a blogging platform.

The capacity of each drive is only 260GB, but that's good enough for a lab :-).

Final Words

I did not go into performance testing, but when working on videos, I have the spare drive (the 4th disk, not used for the vSAN cluster) configured as a scratch disk for rendering, to have the fastest possible system. You can certainly imagine other usage scenarios for Intel Optane drives, especially applications that do a lot of writes per day: database systems, scientific applications or photography. Stay tuned for more…

While this setup might last some time, the idea is to evolve into something more pro, with 3-4 physical hosts, in the future. But for now, this solution stays compact, consumes a reasonable amount of power and is relatively quiet and cool so I can work next to it. If you haven't seen the tiny lab setup I'm currently working in, just have a look at my lab page.
