Today we'll cover another objective from the VCP-DCV certification based on vSphere 8.x: the difference between vSphere Standard Switches (vSS) and vSphere Distributed Switches (vDS). Chapter by chapter, we're getting closer to covering all the blueprint objectives and helping students study for and pass the VCP-DCV exam. Today's chapter: VCP-DCV on vSphere 8.x Objective 1.5 – Explain the difference between VMware standard switches and distributed switches.

VMware just released a new certification exam (2V0-21.23), which focuses on the installation, configuration, and management of VMware vSphere 8. Passing it earns you the VCP-DCV 2023 certification.

Get the latest VCP-DCV 2023 overview PDF from VMware Education. Check the Official VMware VCP-DCV 2023 exam guide (blueprint) here. Check the VMware VCP-DCV 2023 page here.

NOTE: The exam based on vSphere 7.x will be retired on January 31, 2024.

However, you can still pass the exam based on vSphere 7.x right now.

**************************************************

- The exam duration is 135 minutes

- The number of questions is 70

- The passing score is 300

- Price = $250.00

**************************************************

I'm using a lab with VMware Workstation software pre-installed.

VCP-DCV on vSphere 8.x Objective 1.5 – Explain the difference between VMware standard switches and distributed switches

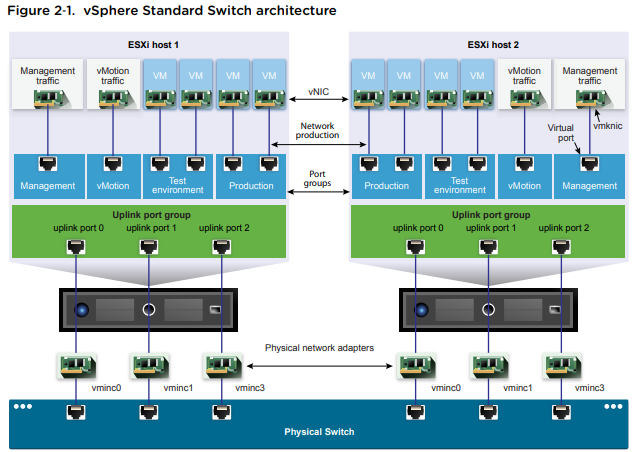

A vSphere Standard Switch is very similar to a physical Ethernet switch. Virtual machine network adapters and physical NICs on the host connect to logical ports on the switch, and each adapter uses one port. Each logical port on the standard switch is a member of a single port group.

When a standard switch is connected to a physical switch through a physical Ethernet adapter, also called an uplink, your virtual infrastructure can communicate with the physical (outside) world.

vSphere Standard Switch (vSS)

It works much like a physical Ethernet switch. It detects which virtual machines are logically connected to each of its virtual ports and uses that information to forward traffic to the correct virtual machines. A vSphere standard switch can be connected to physical switches by using physical Ethernet adapters, also referred to as uplink adapters, to join virtual networks with physical networks.

This type of connection is similar to connecting physical switches together to create a larger network. Even though a vSphere standard switch works much like a physical switch, it does not have some of the advanced functionality of a physical switch.
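To make the forwarding behaviour described above more concrete, here is a minimal Python sketch. It is a toy model, not VMware code: the class name `StandardSwitchSketch`, the `UPLINK` constant, and the MAC addresses are all made up for illustration. The idea it shows is that the switch already knows which MAC sits on which virtual port, so it delivers local traffic directly and hands everything else to the uplink.

```python
from dataclasses import dataclass, field

UPLINK = "uplink"  # hypothetical label for the port leading to the physical network


@dataclass
class StandardSwitchSketch:
    """Toy model of a standard switch: maps vNIC MAC addresses to virtual ports."""
    ports: dict = field(default_factory=dict)  # MAC address -> virtual port ID

    def connect(self, mac: str, port_id: int) -> None:
        # Record which virtual port a VM network adapter is attached to.
        self.ports[mac.lower()] = port_id

    def forward(self, dst_mac: str):
        # Known local MAC: deliver to its virtual port.
        # Anything else: send the frame out through the uplink.
        return self.ports.get(dst_mac.lower(), UPLINK)


switch = StandardSwitchSketch()
switch.connect("00:50:56:aa:bb:01", 1)
switch.connect("00:50:56:aa:bb:02", 2)
print(switch.forward("00:50:56:AA:BB:02"))  # destination is a local VM
print(switch.forward("00:11:22:33:44:55"))  # destination is outside the host
```

The real switch also deals with VLANs, broadcasts, and security policies, which this sketch leaves out on purpose.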

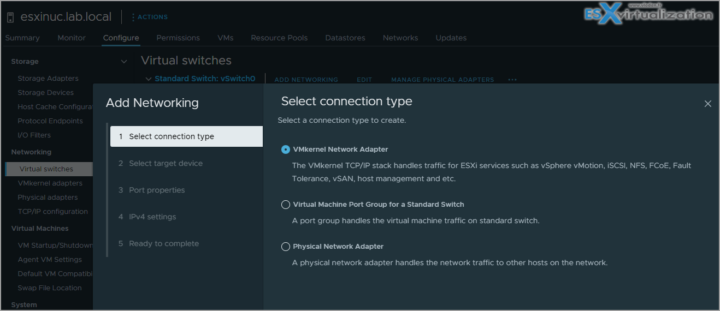

How to create a standard vSwitch?

Select Host > Configure > Networking > Virtual Switches > Add. The wizard then offers to create a VMkernel network adapter, a VM port group, or a physical network adapter.

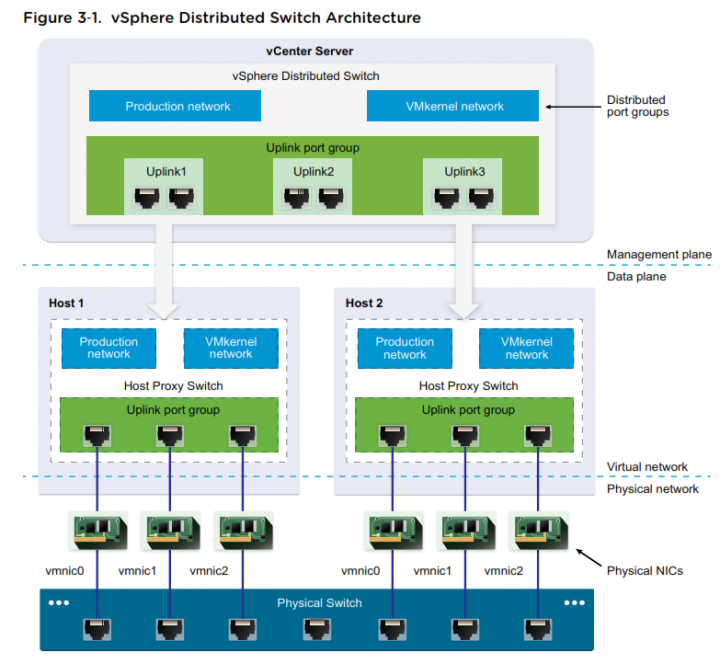

vSphere Distributed Switch

A vSphere distributed switch acts as a single switch across all associated hosts in a data center to provide centralized provisioning, administration, and monitoring of virtual networks. You configure a vSphere distributed switch on the vCenter Server system and the configuration is propagated to all hosts that are associated with the switch.

This lets virtual machines maintain consistent network configuration as they migrate across multiple hosts.
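The "configure once, propagate everywhere" idea can be sketched in a few lines of Python. This is only an illustration under assumptions: `DistributedSwitchSketch`, the host names, and the port group name are hypothetical, and the real propagation is handled by vCenter Server and the hosts, not by code like this.

```python
from dataclasses import dataclass, field


@dataclass
class DistributedSwitchSketch:
    """Toy model: one central definition, copied to every associated host."""
    name: str
    port_groups: dict = field(default_factory=dict)  # port group name -> VLAN ID
    hosts: dict = field(default_factory=dict)        # host name -> its copy of the config

    def add_host(self, host: str) -> None:
        # A newly associated host receives the current configuration.
        self.hosts[host] = dict(self.port_groups)

    def add_port_group(self, pg_name: str, vlan_id: int) -> None:
        # The change is made once, centrally, then pushed to all hosts.
        self.port_groups[pg_name] = vlan_id
        for host in self.hosts:
            self.hosts[host] = dict(self.port_groups)


vds = DistributedSwitchSketch("dvs-lab")
vds.add_host("esxi01")
vds.add_host("esxi02")
vds.add_port_group("pg-prod", 10)
print(vds.hosts)
```

Because both hosts end up with the same port group definition, a VM that moves from one to the other finds the same network waiting for it.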

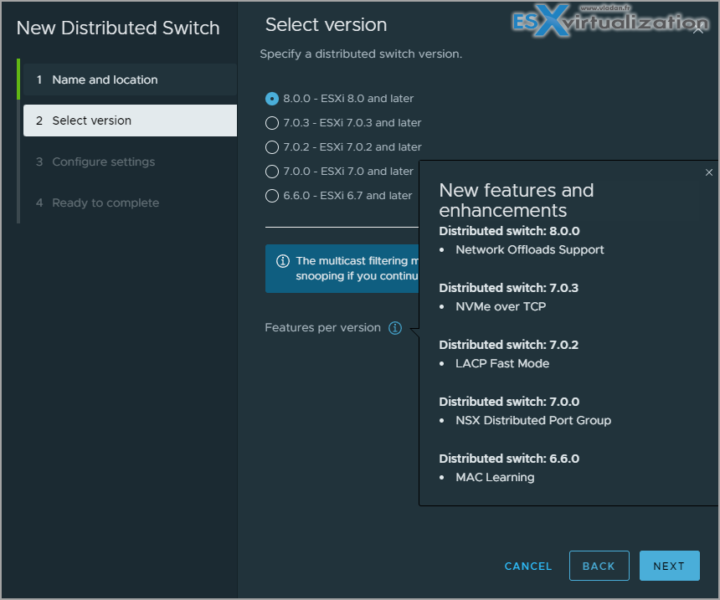

Where do you create one? Right-click the Datacenter object > Create new distributed switch.

VLAN – VLAN enables a single physical LAN segment to be further segmented so that groups of ports are isolated from one another as if they were on physically different segments. The standard is 802.1Q.
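For readers who want to see what 802.1Q tagging looks like on the wire, here is a small Python sketch that builds and parses the 4-byte tag. The field layout (a 0x8100 tag protocol identifier followed by a 3-bit priority, a 1-bit drop-eligible flag, and a 12-bit VLAN ID) comes from the standard; the function names `build_tag` and `parse_tag` are my own, and the drop-eligible bit is simply left at zero.

```python
import struct

TPID_8021Q = 0x8100  # tag protocol identifier that marks an 802.1Q tagged frame


def build_tag(vlan_id: int, priority: int = 0) -> bytes:
    """Pack a 4-byte 802.1Q tag: TPID, then priority (3 bits), DEI (1 bit), VLAN ID (12 bits)."""
    if not 0 <= vlan_id <= 4095:
        raise ValueError("VLAN ID must fit in 12 bits")
    if not 0 <= priority <= 7:
        raise ValueError("priority must fit in 3 bits")
    tci = (priority << 13) | vlan_id  # DEI bit stays 0 in this sketch
    return struct.pack("!HH", TPID_8021Q, tci)


def parse_tag(tag: bytes) -> tuple:
    """Return (priority, vlan_id) from a 4-byte 802.1Q tag."""
    tpid, tci = struct.unpack("!HH", tag)
    if tpid != TPID_8021Q:
        raise ValueError("not an 802.1Q tag")
    return tci >> 13, tci & 0x0FFF


tag = build_tag(vlan_id=100, priority=3)
print(tag.hex())
print(parse_tag(tag))
```

Two ports whose frames carry different VLAN IDs are treated as if they were on separate segments, which is the isolation the definition above describes.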

vSphere Standard Port Group – Network services connect to standard switches through port groups. Port groups define how a connection is made through the switch to the network. Typically, a single standard switch is associated with one or more port groups. A port group specifies port configuration options such as bandwidth limitations and VLAN tagging policies for each member port.

Each port group on a standard switch is identified by a network label, which must be unique to the current host. You can use network labels to make the networking configuration of virtual machines portable across hosts. You should give the same label to the port groups in a data center that use physical NICs connected to one broadcast domain on the physical network.
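Since standard port groups are defined host by host, it is easy for labels to drift apart. The following Python sketch shows one way you might check for that. It works on a plain dictionary that you would have to fill in yourself; the function name `missing_labels`, the host names, and the labels are all hypothetical.

```python
def missing_labels(host_port_groups: dict) -> dict:
    """For each host, list the network labels that exist elsewhere but not on that host."""
    all_labels = set()
    for labels in host_port_groups.values():
        all_labels.update(labels)

    gaps = {}
    for host, labels in host_port_groups.items():
        absent = sorted(all_labels - set(labels))
        if absent:
            gaps[host] = absent
    return gaps


# Hypothetical inventory: host name -> labels of its standard port groups
inventory = {
    "esxi01": ["VM Network", "Prod-VLAN10"],
    "esxi02": ["VM Network"],
}
print(missing_labels(inventory))
```

In this made-up inventory, a VM attached to "Prod-VLAN10" on esxi01 would not find a matching network on esxi02.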

vSphere Distributed Port Group – A port group associated with a vSphere distributed switch that specifies port configuration options for each member port. Distributed port groups define how a connection is made through the vSphere distributed switch to the network.

NIC Teaming – NIC teaming occurs when multiple uplink adapters are associated with a single switch to form a team. A team can either share the load of traffic between physical and virtual networks among some or all of its members, or provide passive failover in the event of a hardware failure or a network outage.

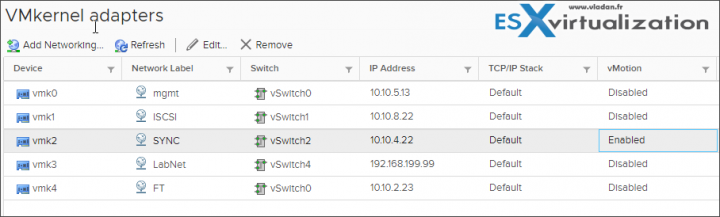

VMkernel port – The VMkernel networking layer provides connectivity to hosts and handles the standard infrastructure traffic of vSphere vMotion, IP storage, Fault Tolerance, and vSAN.

Uplink port – An Ethernet adapter connected to the outside world. It connects the virtual switch to physical networks.

I invite you to read the vSphere Networking PDF for more details. You can find the link to the PDF on the VCP6.7-DCV Study Guide – VCP-DCV 2019 certification page.

Further reading of the document will give you details on:

- Managing networking on multiple hosts on a VDS

- Migrating VMkernel adapters to VDS

- Create VMkernel adapters on VDS

- Use Host as a template to create a uniform networking configuration on VDS

Networking Policies

Policies set at the standard switch or distributed port group level apply to all of the port groups on the standard switch or to ports in the distributed port group. The exceptions are the configuration options that are overridden at the standard port group or distributed port level.
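The inherit-unless-overridden rule can be pictured with Python's `ChainMap`, which looks a key up in the first dictionary and falls back to the second. This is only a sketch: the policy keys and values below are invented for the example and are not real vSphere setting names or defaults.

```python
from collections import ChainMap

# Hypothetical policy set at the standard switch level
switch_policy = {
    "promiscuous_mode": False,
    "load_balancing": "originating_port_id",
    "traffic_shaping": False,
}

# Hypothetical override set on one port group only
port_group_override = {"promiscuous_mode": True}

# Lookups try the port group override first, then fall back to the switch policy.
effective = ChainMap(port_group_override, switch_policy)

print(effective["promiscuous_mode"])  # overridden at the port group
print(effective["load_balancing"])    # inherited from the switch
```

The same picture applies one level down on a distributed switch, where an individual port can override what its distributed port group defines.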

Teaming and Failover Policy – NIC teaming lets you increase the network capacity of a virtual switch by including two or more physical NICs in a team. To determine how the traffic is rerouted in case of adapter failure, you include physical NICs in a failover order. To determine how the virtual switch distributes the network traffic between the physical NICs in a team, you select load balancing algorithms depending on the needs and capabilities of your environment.
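Here is a simplified Python model of how a failover order might play out. It assumes a list of active adapters and a list of standby adapters, and promotes one healthy standby adapter for each failed active one. The function name `usable_uplinks` and the link-state dictionary are hypothetical, and real hosts also take failure detection and failback settings into account.

```python
def usable_uplinks(active: list, standby: list, link_up: dict) -> list:
    """Return the adapters that would carry traffic in this simplified model."""
    healthy_active = [nic for nic in active if link_up.get(nic, False)]
    failed_count = len(active) - len(healthy_active)

    # Promote standby adapters, in order, to replace failed active ones.
    healthy_standby = [nic for nic in standby if link_up.get(nic, False)]
    return healthy_active + healthy_standby[:failed_count]


active = ["vmnic0", "vmnic1"]
standby = ["vmnic2"]

print(usable_uplinks(active, standby, {"vmnic0": True, "vmnic1": True, "vmnic2": True}))
print(usable_uplinks(active, standby, {"vmnic0": False, "vmnic1": True, "vmnic2": True}))
```

With all links up, only the active adapters are used. When vmnic0 goes down, vmnic2 steps in next to vmnic1.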

NIC Teaming Policy – You can use NIC teaming to connect a virtual switch to multiple physical NICs on a host to increase the network bandwidth of the switch and to provide redundancy. A NIC team can distribute the traffic between its members and provide passive failover in case of adapter failure or network outage. You set NIC teaming policies at virtual switch or port group level for a vSphere Standard Switch and at a port group or port level for a vSphere Distributed Switch.

Load Balancing policy – The Load Balancing policy determines how network traffic is distributed between the network adapters in a NIC team. vSphere virtual switches load balance only the outgoing traffic. Incoming traffic is controlled by the load balancing policy on the physical switch.
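As an illustration of outgoing load balancing, the sketch below pins each virtual port to one uplink using a simple modulo. Treat this as an assumption made for teaching purposes: the actual selection logic is internal to ESXi, and `uplink_for_port` is a made-up helper, not a VMware function.

```python
def uplink_for_port(port_id: int, uplinks: list) -> str:
    """Pick an uplink for outgoing traffic from a virtual port (simplified modulo model)."""
    if not uplinks:
        raise ValueError("the team has no uplinks")
    return uplinks[port_id % len(uplinks)]


team = ["vmnic0", "vmnic1"]
for port_id in (5, 8, 9):
    print(port_id, uplink_for_port(port_id, team))
```

The point to notice is that a given virtual port always maps to the same uplink while the team is unchanged, so a single VM adapter does not get more bandwidth than one physical NIC in this model. Traffic coming back in is outside the virtual switch's control, as noted above.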

Check the vSphere Networking PDF for more details. You'll find more about:

- VLAN policy

- Security policy

- Traffic shaping policy

- Resource allocation policy

- Monitoring policy

- Traffic filtering and marking policy

- Port blocking policy

We simply can't squeeze all the networking knowledge into a single post. Check also:

- Configure policies/features and verify vSphere networking

- Configure Network I/O control (NIOC)

- Troubleshoot vSphere Storage and Networking

Some best practices:

Dedicate a separate physical NIC to a group of virtual machines, or use Network I/O Control and traffic shaping to guarantee bandwidth to the virtual machines.

To physically separate network services and to dedicate a particular set of NICs to a specific network service, create a vSphere Standard Switch or vSphere Distributed Switch for each service. If that is not possible, separate network services on a single switch by attaching them to port groups with different VLAN IDs.

Keep the vSphere vMotion connection on a separate network. When a migration with vMotion occurs, the contents of the guest operating system's memory are transmitted over the network. You can isolate this traffic either by using VLANs to segment a single physical network or by using separate physical networks (the latter is preferable).

Find other chapters on the main page of the guide – VCP8-DCV Study Guide Page.
